Google Cloud PostgreSQL is a fully managed relational database service provided by Google Cloud Platform (GCP), hosting a PostgreSQL database with high availability, scalability, and security. Google Cloud takes care of the infrastructure management tasks, such as provisioning, backups, and replication, allowing you to focus on deploying, managing, and scaling your PostgreSQL database.

You can ingest data from your Google Cloud PostgreSQL database using Hevo Pipelines and replicate it to a Destination of your choice.

Prerequisites

Set up Logical Replication for Incremental Data

Hevo supports data replication from PostgreSQL servers using the pgoutput plugin (available on PostgreSQL version 10.0 and above). For this, Hevo identifies the incremental data from publications, which are defined to track changes generated by all or some database tables. A publication identifies the changes generated by those tables from the Write-Ahead Logs (WALs), which must be set at the logical level.

Perform the following steps to enable logical replication on your Google Cloud PostgreSQL server:

-

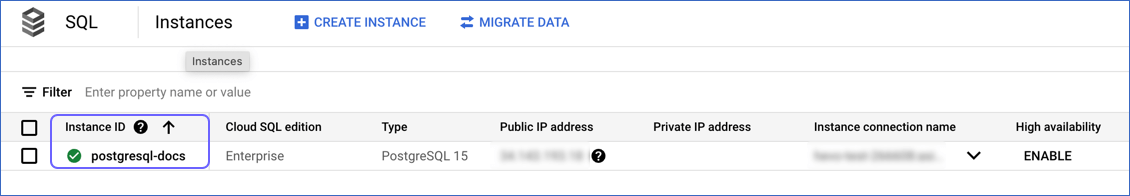

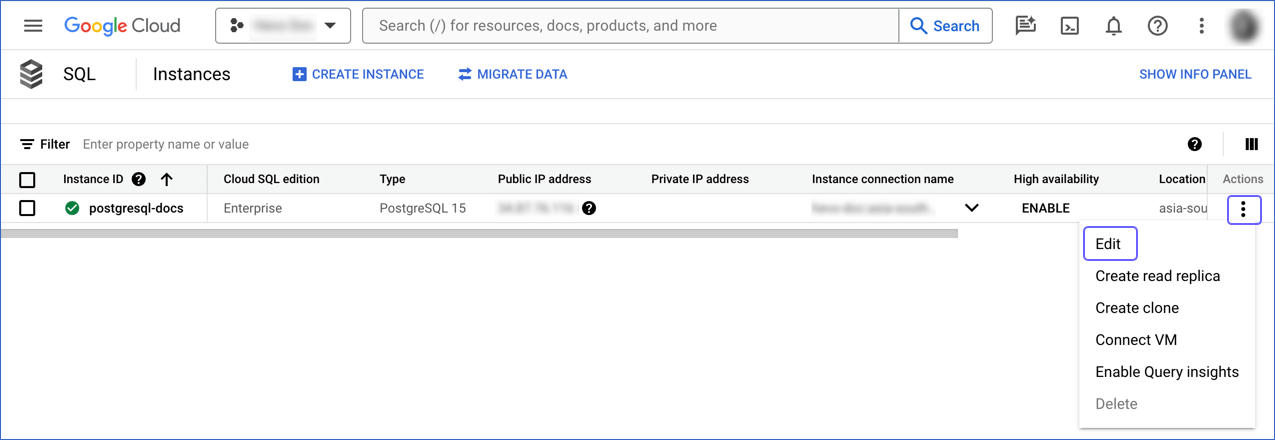

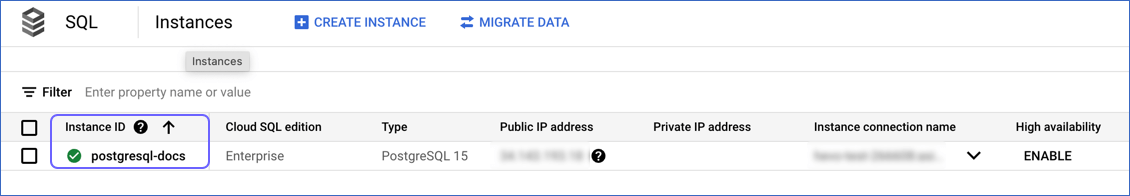

Log in to Google Cloud SQL to access your database instance.

-

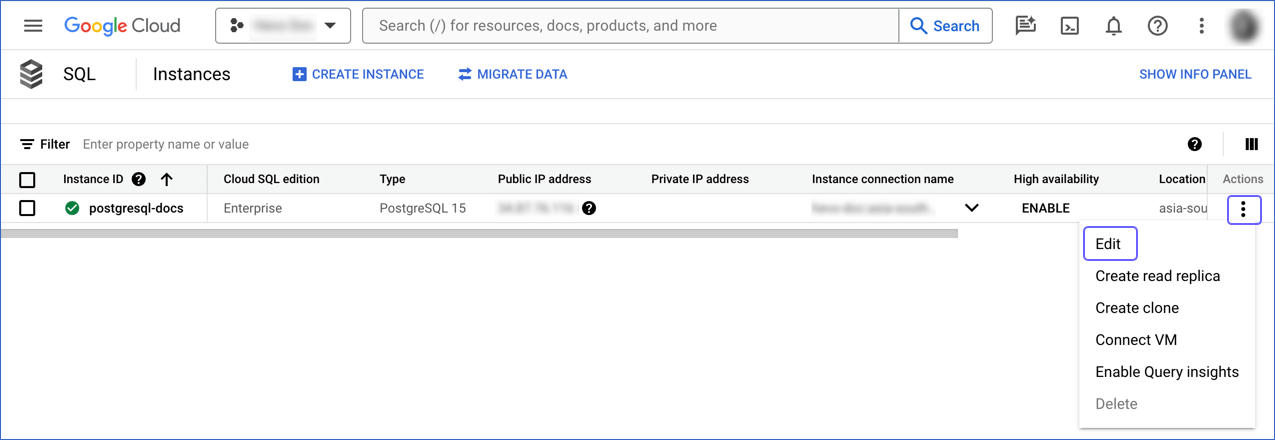

Click the More ( ) icon next to the PostgreSQL instance and click Edit.

) icon next to the PostgreSQL instance and click Edit.

-

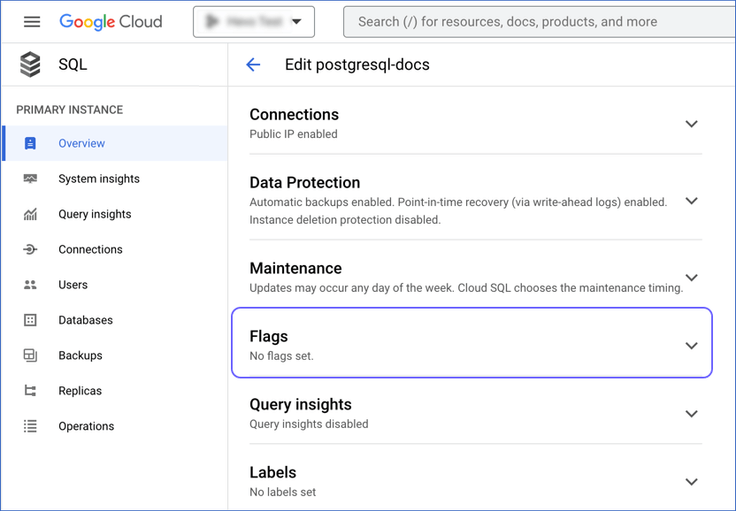

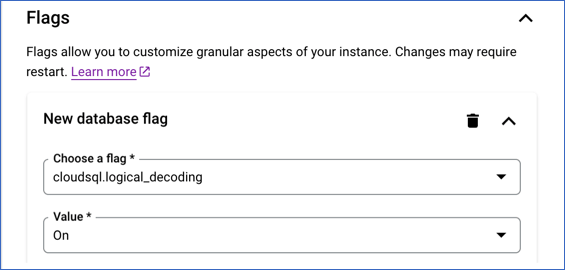

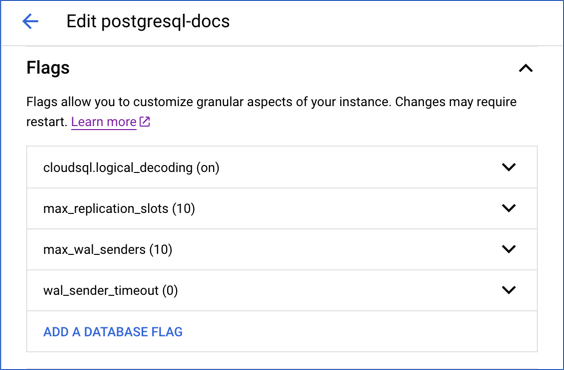

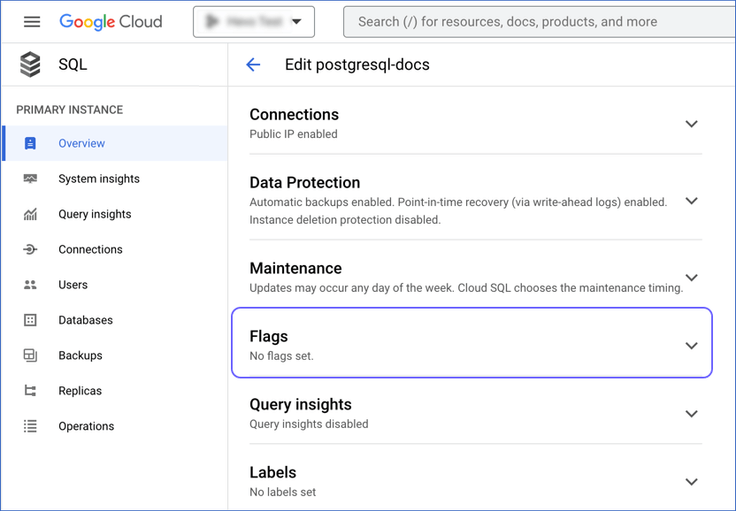

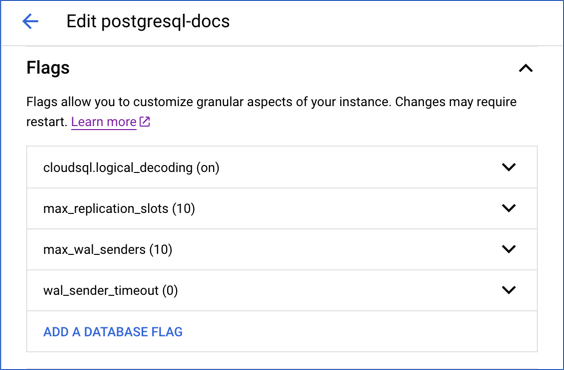

Scroll down to the Flags section.

-

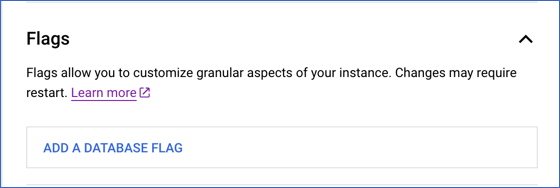

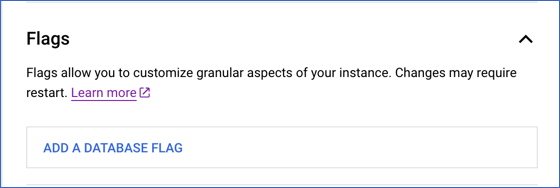

Click the drop-down next to Flags and click ADD A DATABASE FLAG.

-

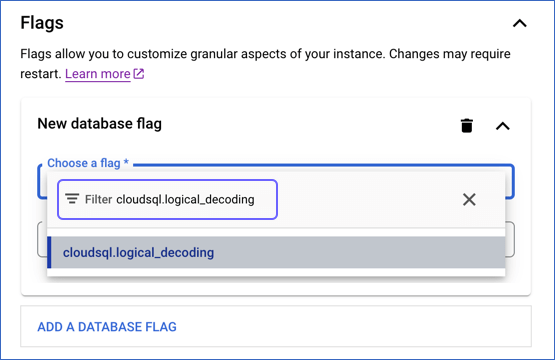

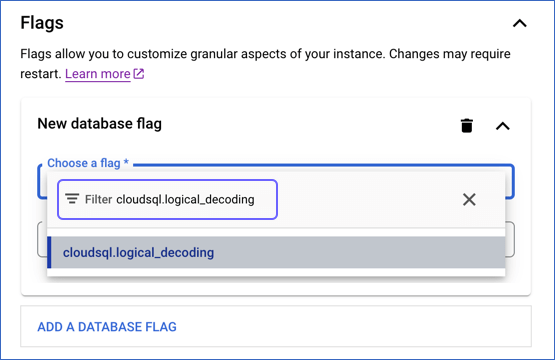

In the New database flag dialog window, click the arrow in the Choose a flag bar and type the flag name in the Filter bar.

-

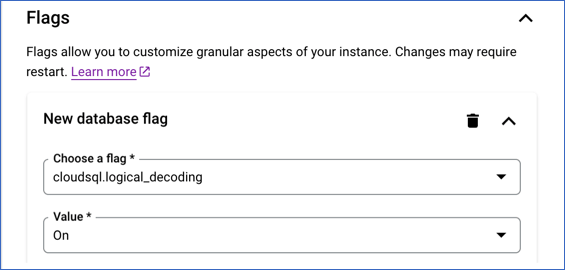

Click the flag name to select it. Select an appropriate value for the flag from the drop-down or by typing it.

-

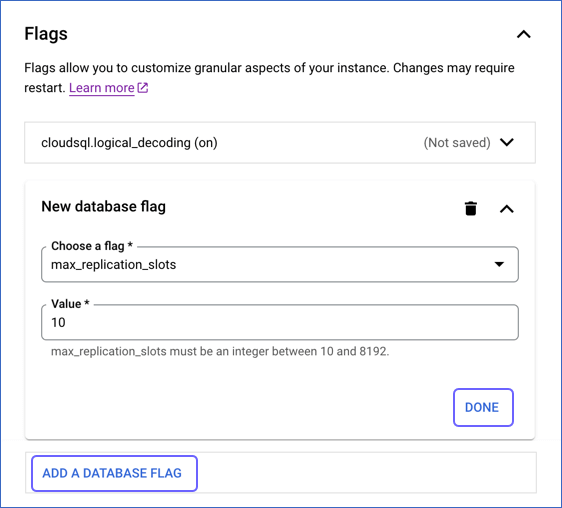

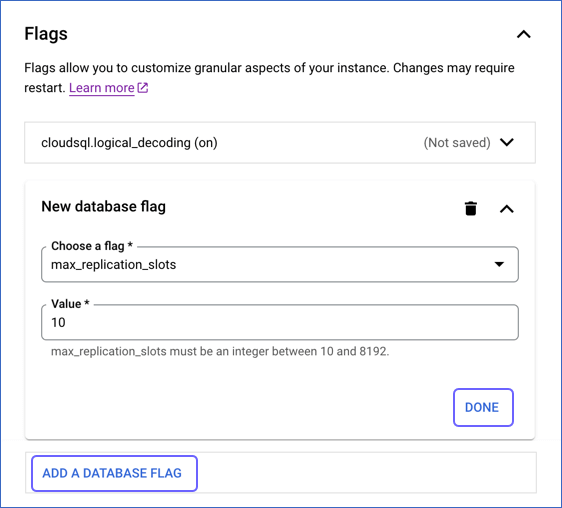

Click DONE and proceed to the next step to add all the required flags. Skip to step 9 if you have finished adding the flags.

-

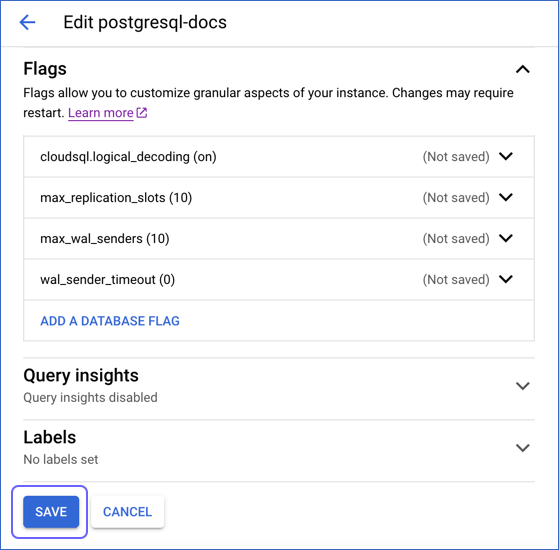

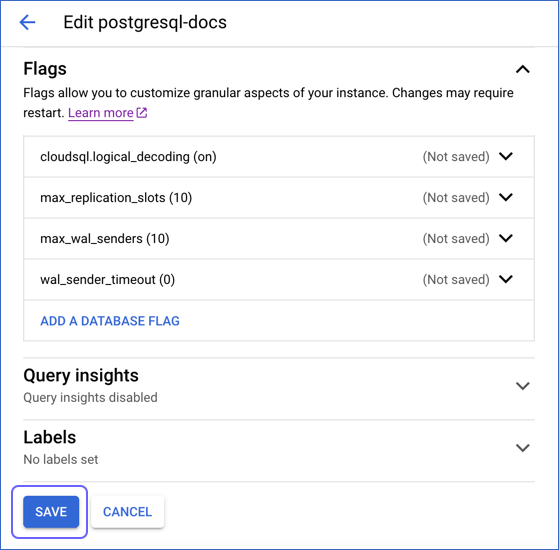

Click ADD A DATABASE FLAG, and then, repeat steps 5-7 to add the following flags and the specified value:

| Flag Name | Value | Description |
|---|---|---|
| cloudsql.logical_decoding | On | The setting to enable or turn off logical replication. Default value: Off. |
| max_replication_slots | 10 | The maximum number of replication slots that the server can support. Default value: 10. |
| max_wal_senders | 10 | The number of processes that can simultaneously transmit the WAL. Default value: 10. |
| wal_sender_timeout | 0 | The time after which PostgreSQL terminates the replication connections due to inactivity. A time value specified without units is assumed to be in milliseconds. Default value: 60 seconds. You must set the value to 0 so that the connections are never terminated, and your Pipeline does not fail. |

-

Click SAVE.

-

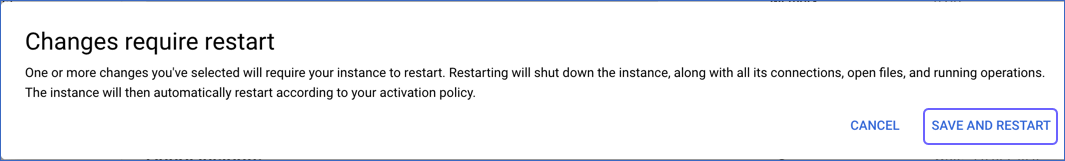

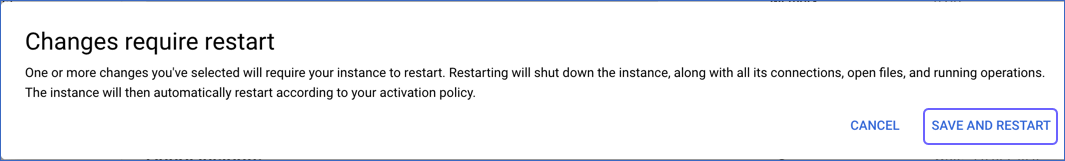

In the confirmation dialog, click SAVE AND RESTART.

Once the instance restarts, you can view the configured settings under the Flags section.
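You can also confirm the settings from an SQL client after the restart. The following is a minimal sketch that assumes you are connected to the instance on which you set the flags. It only reads the current values and changes nothing.

```sql
-- Each statement returns the current value of one server setting.
-- wal_level is expected to report 'logical' once cloudsql.logical_decoding is On.
SHOW wal_level;
SHOW max_replication_slots;
SHOW max_wal_senders;
SHOW wal_sender_timeout;
```

If wal_level does not report logical, check that the instance was restarted after you saved the flags.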

2. Create a publication for your database tables

In PostgreSQL 10 onwards, the data to be replicated is identified via publications. A publication must be defined on the primary database instance and can include some or all the database tables. The publication is a group of tables that tracks and determines the set of changes generated by those tables from the Write-Ahead Logs (WALs).

To define a publication:

Note: You must define a publication with the insert, update, and delete privileges.

1. Connect to your Google Cloud PostgreSQL database as a user having the cloudsqlsuperuser (Superuser) role with an SQL client such as DataGrip.

2. Run one of the following commands to create a publication:

   Note: You can create multiple distinct publications whose names do not start with a number in a single database.

   - In PostgreSQL 10 and above without the publish_via_partition_root parameter:

     Note: By default, the versions that support this parameter create a publication with publish_via_partition_root set to FALSE.

     - For one or more database tables:

       CREATE PUBLICATION <publication_name> FOR TABLE <table_1>, <table_4>, <table_5>;

     - For all database tables:

       Note: You can run this command only as a Superuser.

       CREATE PUBLICATION <publication_name> FOR ALL TABLES;

   - In PostgreSQL 13 and above with the publish_via_partition_root parameter:

     - For one or more database tables:

       CREATE PUBLICATION <publication_name> FOR TABLE <table_1>, <table_4>, <table_5> WITH (publish_via_partition_root);

     - For all database tables:

       Note: You can run this command only as a Superuser.

       CREATE PUBLICATION <publication_name> FOR ALL TABLES WITH (publish_via_partition_root);

     Read Handling Source Partitioned Tables for information on how this parameter affects data loading from partitioned tables.

3. (Optional) Run one of the following commands to add table(s) to or remove them from a publication:

   Note: You can modify a publication only if it is not defined on all tables and you have ownership rights on the table(s) being added or removed.

   ALTER PUBLICATION <publication_name> ADD TABLE <table_name>;

   ALTER PUBLICATION <publication_name> DROP TABLE <table_name>;

   When you alter a publication, you must refresh the schema for the changes to be visible in your Pipeline.

4. (Optional) Run the following command to create a publication on a column list:

   Note: This feature is available in PostgreSQL versions 15 and higher.

   CREATE PUBLICATION <columns_publication> FOR TABLE <table_name> (<column_name1>, <column_name2>, <column_name3>, <column_name4>,...);

   -- Example to create a publication with three columns

   CREATE PUBLICATION film_data_filtered FOR TABLE film (film_id, title, description);

   Run the following command to alter a publication created on a column list:

   ALTER PUBLICATION <columns_publication> SET TABLE <table_name> (<column_name1>, <column_name2>, ...);

   -- Example to drop a column from the publication created above

   ALTER PUBLICATION film_data_filtered SET TABLE film (film_id, title);

Note: Replace the placeholder values in the commands above with your own. For example, <publication_name> with hevo_publication.
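To check what a publication covers after you create or alter it, you can query the PostgreSQL system catalogs. The sketch below assumes the example publication name hevo_publication. Substitute your own name.

```sql
-- Confirm that the publication exists and whether it covers all tables.
SELECT pubname, puballtables
FROM pg_publication
WHERE pubname = 'hevo_publication';

-- List the tables that the publication currently includes.
SELECT schemaname, tablename
FROM pg_publication_tables
WHERE pubname = 'hevo_publication'
ORDER BY schemaname, tablename;
```

An empty result from the second query means that no tables are currently published through that publication.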

Allowlist Hevo IP addresses for your region

You must add Hevo’s IP address for your region to the database IP allowlist, enabling Hevo to connect to your Google Cloud PostgreSQL database. To do this:

-

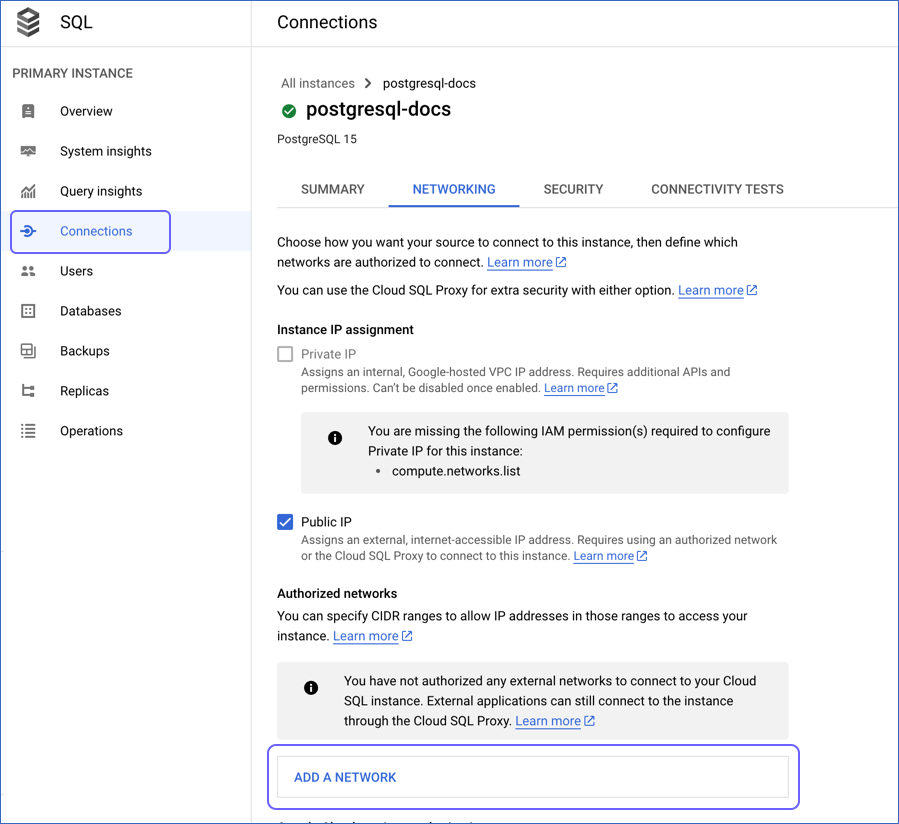

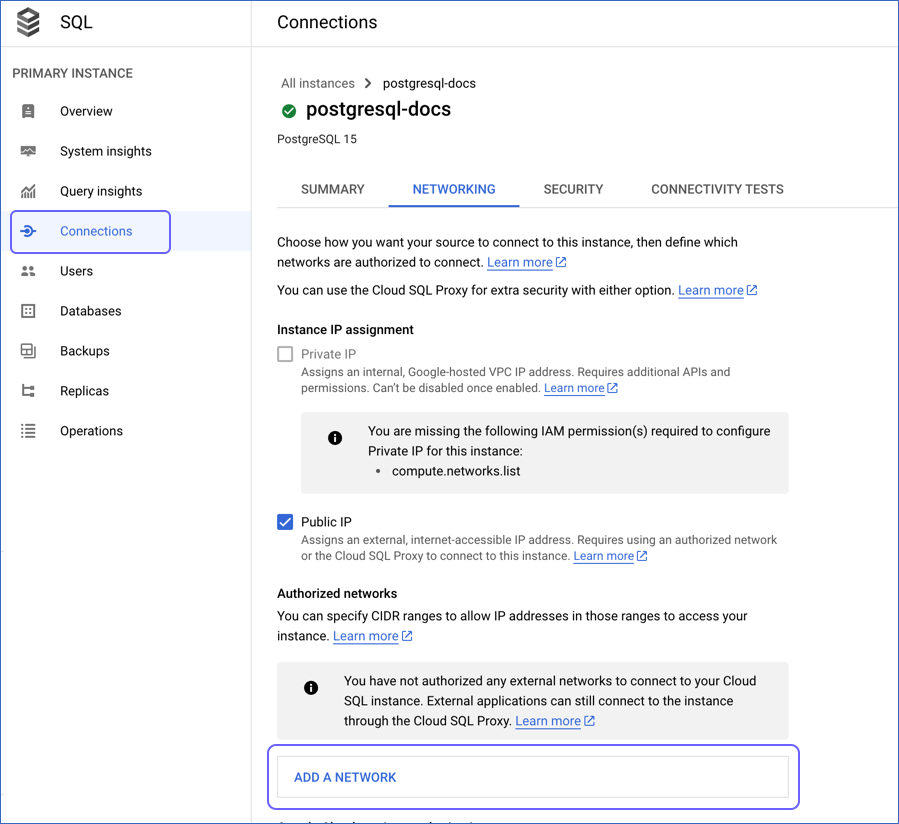

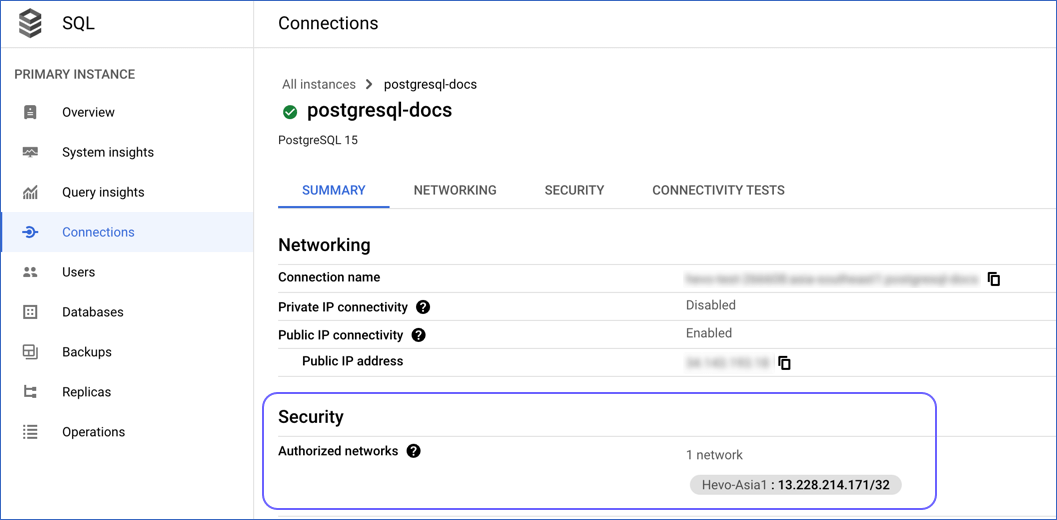

Log in to the Google Cloud SQL Console and click on your PostgreSQL instance ID.

-

In the left navigation pane of the <PostgreSQL Instance ID> page, click Connections.

-

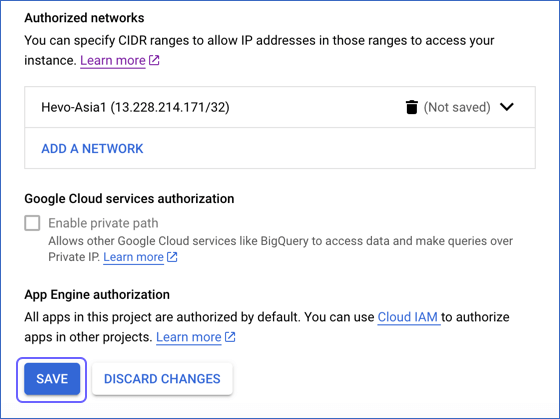

In the Connections pane, click the NETWORKING tab, and scroll down to the Authorized networks section.

-

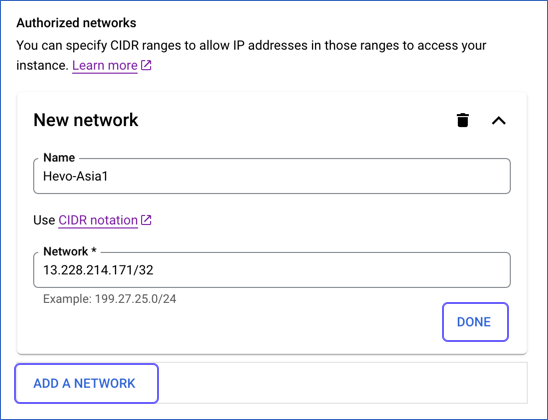

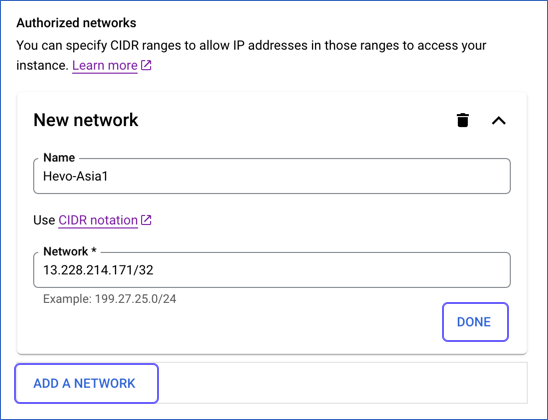

Click ADD A NETWORK, and in New Network, specify the following:

-

Click DONE, and then, repeat the step above for all the network addresses you want to add.

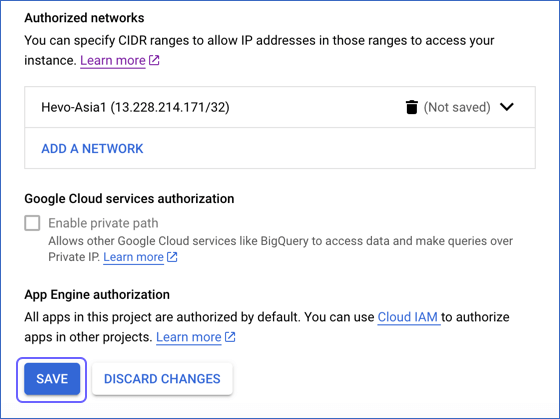

Note: If you have created a read replica for your PostgreSQL database, you must add the outgoing IP address of the replica. Read Get the outgoing IP address of a replica instance for the steps to do this.

-

Click Save.

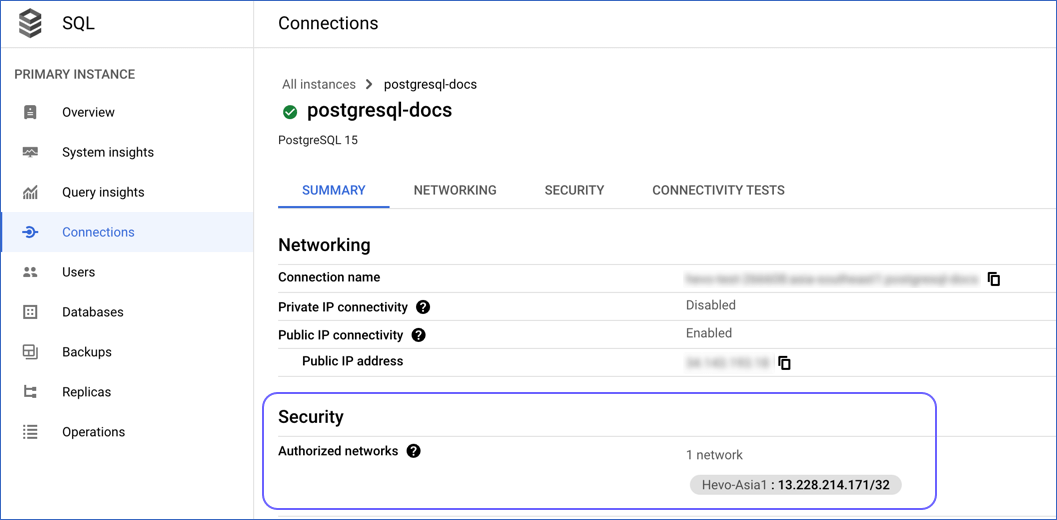

You can view the networks that you have authorized in the Summary tab of the Connections page under Security.

Create a Database User and Grant Privileges

1. Create a database user (Optional)

Perform the following steps to create a user in your Google Cloud PostgreSQL database:

1. Connect to your Google Cloud PostgreSQL database as a user having the cloudsqlsuperuser (Superuser) role with an SQL client such as DataGrip.

2. Run the following command to create a user in your database:

   CREATE USER <database_username> WITH LOGIN PASSWORD '<password>';

Note: Replace the placeholder values in the command above with your own. For example, <database_username> with hevouser.

2. Grant privileges to the user

The following table lists the privileges that the database user for Hevo requires to connect to and ingest data from your PostgreSQL database:

| Privilege Name | Allows Hevo to |
|---|---|
| CONNECT | Connect to the specified database. |
| USAGE | Access the objects in the specified schema. |
| SELECT | Select rows from the database tables. |
| ALTER DEFAULT PRIVILEGES | Access new tables created in the specified schema after Hevo has connected to the PostgreSQL database. |
| REPLICATION | Access the WALs. |
| cloudsqlsuperuser | Create logical replication slots. |

Perform the following steps to grant privileges to the database user:

1. Connect to your Google Cloud PostgreSQL database as a user having the cloudsqlsuperuser (Superuser) role with an SQL client such as DataGrip.

2. Run the following commands to grant privileges to your database user:

   GRANT CONNECT ON DATABASE <database_name> TO <database_username>;

   GRANT USAGE ON SCHEMA <schema_name> TO <database_username>;

   GRANT SELECT ON ALL TABLES IN SCHEMA <schema_name> TO <database_username>;

3. (Optional) Alter the schema to grant SELECT privileges on tables created in the future to your database user:

   Note: Grant this privilege only if you want Hevo to replicate data from tables created in the schema after the Pipeline is created.

   ALTER DEFAULT PRIVILEGES IN SCHEMA <schema_name> GRANT SELECT ON TABLES TO <database_username>;

4. Run the following command to grant your database user permission to read from the WALs:

   ALTER USER <database_username> WITH REPLICATION;

5. Run the following command to allow your database user to create logical replication slots:

   GRANT cloudsqlsuperuser TO <database_username>;

Note: Replace the placeholder values in the commands above with your own. For example, <database_username> with hevouser.
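Once the grants are in place, you can check them with PostgreSQL's built-in access functions. This is a sketch only. The names hevouser, dvdrental, and film come from the examples in this document, and the public schema is an assumption, so replace all of them with your own values.

```sql
-- Check the CONNECT, USAGE, and SELECT privileges for the user.
SELECT
  has_database_privilege('hevouser', 'dvdrental', 'CONNECT') AS can_connect,
  has_schema_privilege('hevouser', 'public', 'USAGE')        AS can_use_schema,
  has_table_privilege('hevouser', 'public.film', 'SELECT')   AS can_select_film;

-- Check whether the user has the REPLICATION attribute.
SELECT rolname, rolreplication
FROM pg_roles
WHERE rolname = 'hevouser';

-- Check whether the user is a member of cloudsqlsuperuser.
SELECT pg_has_role('hevouser', 'cloudsqlsuperuser', 'MEMBER') AS is_cloudsqlsuperuser;
```

Each check returns true or false, so a false value points to the grant that still needs to be run.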

Retrieve the Database Hostname (Optional)

-

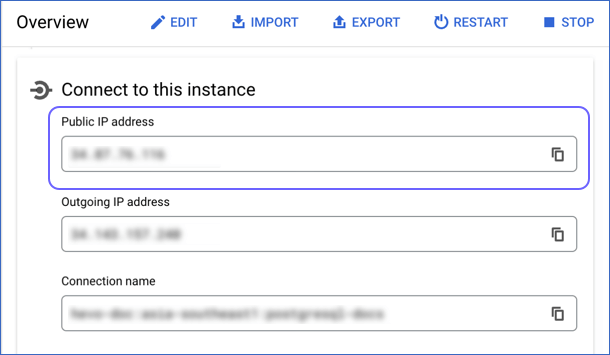

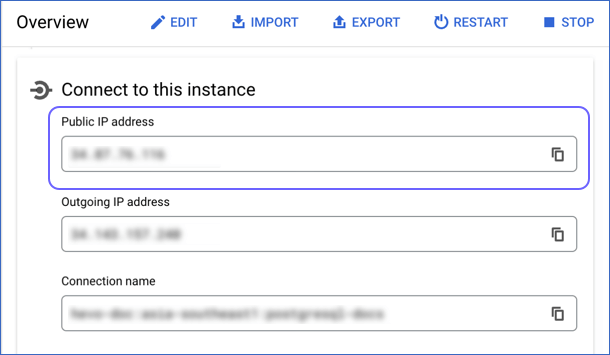

On the Google Cloud SQL page, click the Instance ID of your PostgreSQL database.

-

In the Overview page, scroll to the Connect to this instance section.

-

Copy the value in the Public IP address field. Use this value as your Database Host while configuring your Google Cloud PostgreSQL Source in Hevo.

Note: Google Cloud SQL always uses the default port number, 5432 for the PostgreSQL instance.

Configure Google Cloud PostgreSQL as a Source in your Pipeline

Perform the following steps to configure your Google Cloud PostgreSQL Source:

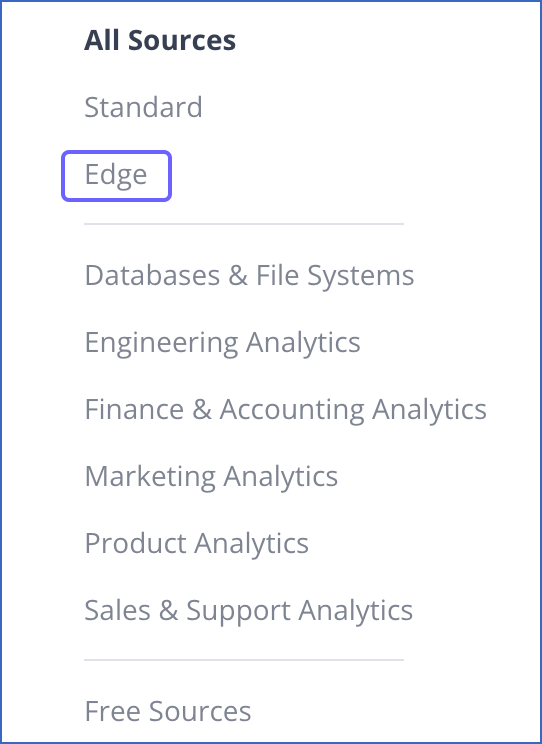

-

Click PIPELINES in the Navigation Bar.

-

Click + CREATE PIPELINE in the Pipelines List View.

-

On the Select Source Type page, under All Sources, click Edge, and then select Google Cloud PostgreSQL.

-

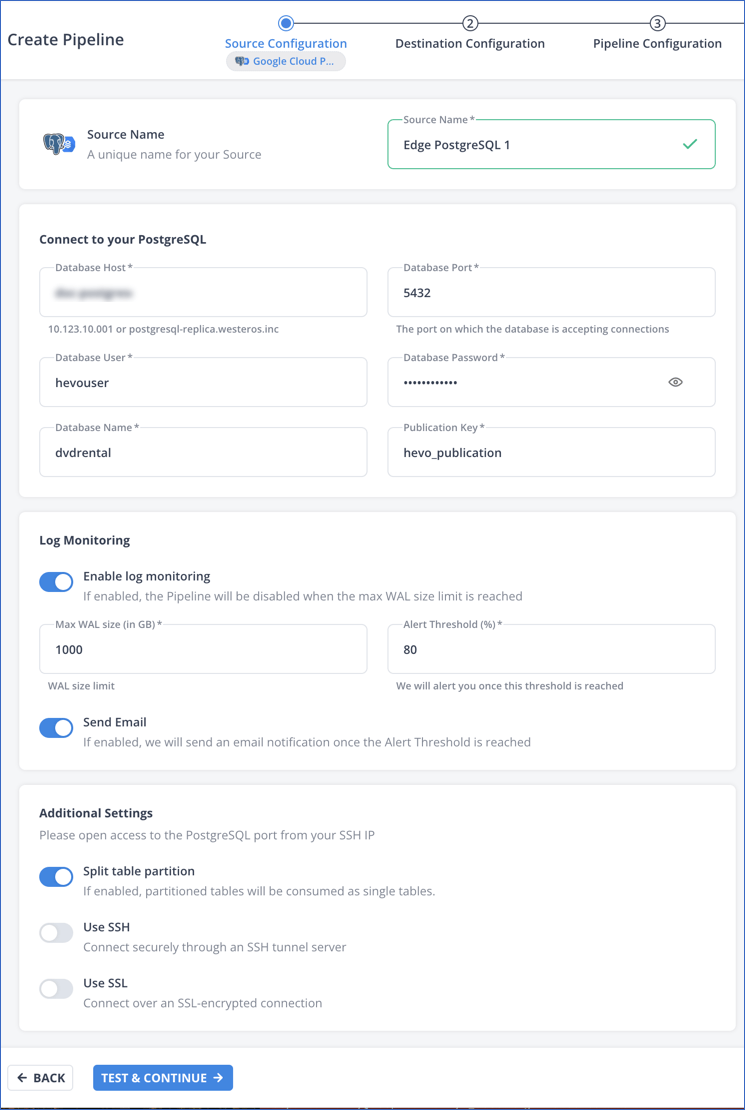

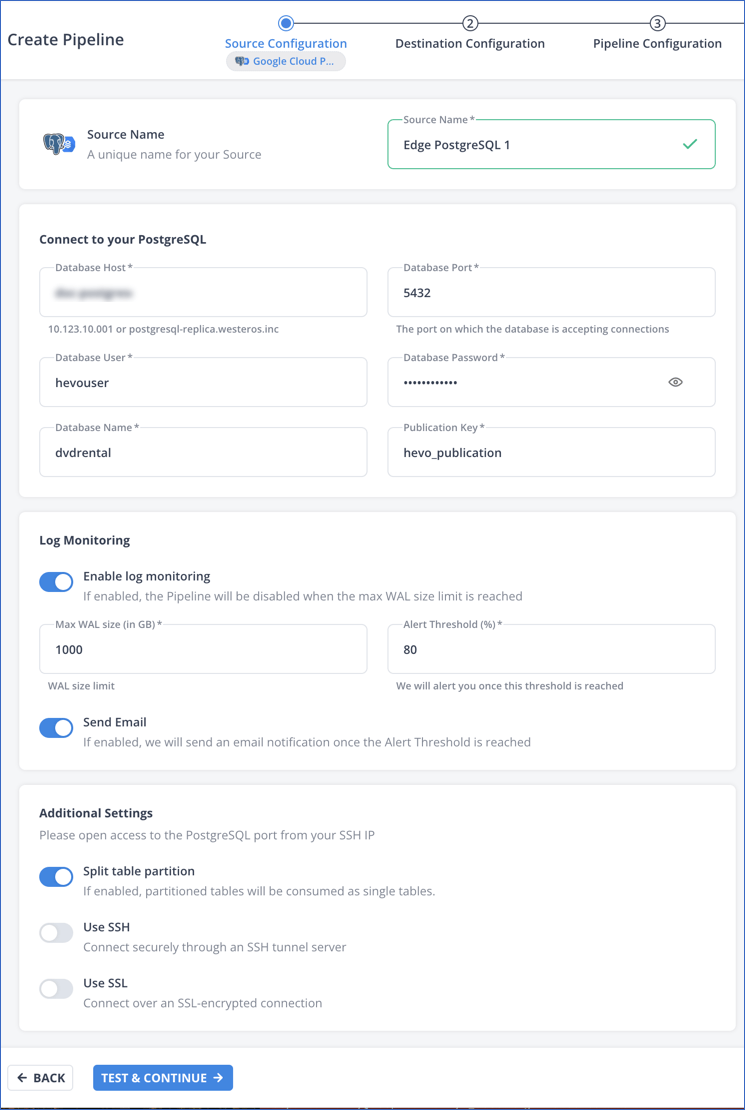

In the Google Cloud PostgreSQL screen, specify the following:

-

Source Name: A unique name for your Source, not exceeding 255 characters. For example, Edge PostgreSQL 1.

-

In the Connect to your PostgreSQL section:

-

Database Host: The Google Cloud PostgreSQL host’s IP address or DNS. This is the Public IP address that you obtained in the Retrieve the Database Hostname step of the Getting Started section.

-

Database Port: The port on which your Google Cloud PostgreSQL server listens for connections. Default value: 5432.

-

Database User: The user who has permission only to read data from your database tables. This user can be the one you created in the Create a database user step of the Getting Started section or an existing user. For example, hevouser.

-

Database Password: The password of your database user.

-

Database Name: The database from where you want to replicate data. For example, dvdrental.

-

Publication Key: The name of the publication in your database that tracks the changes in your database tables. This key can be the publication you created in the Create a publication for your database tables step of the Getting Started section or an existing publication.

-

Log Monitoring: Enable this option if you want Hevo to disable your Pipeline when the size of the WAL being monitored reaches the set maximum value. Specify the following:

-

Max WAL Size (in GB): The maximum allowable size of the Write-Ahead Logs that you want Hevo to monitor. Specify a number greater than 1.

-

Alert Threshold (%): The percentage limit for the WAL, whose size Hevo is monitoring. An alert is sent when this threshold is reached. Specify a value between 50 to 80. For example, if you set the Alert Threshold to 80, Hevo sends a notification when the WAL size is at 80% of the Max WAL Size specified above.

-

Send Email: Enable this option to send an email when the WAL size has reached the specified Alert Threshold percentage.

If this option is turned off, Hevo does not send an email alert.

-

Additional Settings

-

Split table partition: Enable this option to create the partitions of Source tables as individual objects at the Destination. Read Handling Source Partitioned Tables for information on how this option determines Hevo’s behavior for the specified publication key.

-

Use SSH: Enable this option to connect to Hevo using an SSH tunnel instead of directly connecting your PostgreSQL database host to Hevo. This provides an additional level of security to your database by not exposing your PostgreSQL setup to the public.

If this option is turned off, you must configure your Source to accept connections from Hevo’s IP addresses.

-

Use SSL: Enable this option to use an SSL-encrypted connection. Specify the following:

-

CA File: The file containing the SSL server certificate authority (CA).

-

Client Certificate: The client’s public key certificate file.

-

Client Key: The client’s private key file.

-

Click TEST & CONTINUE to test the connection to your Google Cloud PostgreSQL Source. Once the test is successful, you can proceed to set up your Destination.
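When choosing a Max WAL Size for Log Monitoring, it may help to see how much WAL your replication slots currently retain. The query below is a rough sketch of one way to estimate this on the primary instance. It is not necessarily how Hevo measures the WAL size, and it assumes PostgreSQL 10 or higher.

```sql
-- Approximate amount of WAL retained on behalf of each replication slot.
-- Run this on the primary; pg_current_wal_lsn() is not available during recovery.
SELECT
  slot_name,
  active,
  pg_size_pretty(pg_wal_lsn_diff(pg_current_wal_lsn(), restart_lsn)) AS retained_wal
FROM pg_replication_slots;
```

As a worked example of the threshold setting, a Max WAL Size of 10 GB with an Alert Threshold of 80 corresponds to an alert at 8 GB.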


Data Type Mapping

Hevo maps the PostgreSQL Source data type internally to a unified data type, referred to as the Hevo Data Type, in the table below. This data type is used to represent the Source data from all supported data types in a lossless manner.

The following table lists the supported PostgreSQL data types and the corresponding Hevo data type to which they are mapped:

| PostgreSQL Data Type | Hevo Data Type |
|---|---|
| INT_2, SHORT, SMALLINT, SMALLSERIAL | SHORT |
| BIT(1), BOOL | BOOLEAN |
| BIT(M) with M>1, BYTEA, VARBIT | BYTEARRAY |
| INT_4, INTEGER, SERIAL | INTEGER |
| BIGSERIAL, INT_8, OID | LONG |
| FLOAT_4, REAL | FLOAT |
| DOUBLE_PRECISION, FLOAT_8 | DOUBLE |
| BOX, BPCHAR, CIDR, CIRCLE, CITEXT, DATERANGE, ENUM, GEOMETRY, GEOGRAPHY, HSTORE, INET, INT_4_RANGE, INT_8_RANGE, INTERVAL, LINE, LINE SEGMENT, LTREE, MACADDR, MACADDR_8, NUMRANGE, PATH, POINT, POLYGON, TEXT, TSRANGE, TSTZRANGE, UUID, VARCHAR, XML | VARCHAR |
| TIMESTAMPTZ | TIMESTAMPTZ (Format: YYYY-MM-DDTHH:mm:ss.SSSSSSZ) |
| JSON, JSONB, POINT | JSON |
| DATE | DATE |
| TIME | TIME |
| TIMESTAMP | TIMESTAMP |
| MONEY, NUMERIC | DECIMAL |
| ARRAY | JSON |

At this time, the following PostgreSQL data types are not supported by Hevo:

Note: If any of the Source objects contain data types that are not supported by Hevo, they are marked as unsupported during object configuration in the Pipeline.

Handling of Deletes

In a PostgreSQL database for which the WAL level is set to logical, Hevo uses the database logs for data replication. As a result, Hevo can track all operations, such as insert, update, or delete, that take place in the database. Hevo replicates delete actions in the database logs to the Destination table by setting the value of the metadata column, __hevo__marked_deleted to True.
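Because deleted rows remain in the Destination table with the flag set, queries at the Destination may need to exclude them. The following is an illustrative sketch only. It assumes a Destination table named film (a hypothetical name) and a Destination that accepts standard SQL boolean predicates.

```sql
-- Return only the rows that have not been marked as deleted at the Source.
-- IS NOT TRUE also keeps rows where the flag is NULL.
SELECT *
FROM film
WHERE __hevo__marked_deleted IS NOT TRUE;
```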

Source Considerations

- If you add a column with a default value to a table in PostgreSQL, entries with it are created in the WAL only for the rows that are added or updated after the column is added. As a result, in the case of log-based Pipelines, Hevo cannot capture the column value for the unchanged rows. To capture those values, you need to:

- Any table included in a publication must have a replica identity configured. PostgreSQL uses it to track the UPDATE and DELETE operations. Hence, these operations are disallowed on tables without a replica identity. As a result, Hevo cannot track the updates or deletes for such tables.

  By default, PostgreSQL picks the table’s primary key as the replica identity. If your table does not have a primary key, you must either define one or set the replica identity as FULL, which records the changes to all the columns in a row.
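The replica identity of a table can be inspected and changed from an SQL client. The sketch below uses public.film as an illustrative table name. Replace it with a table from your publication.

```sql
-- Inspect the current replica identity of a table.
-- relreplident values: d = default (primary key), i = index, f = full, n = nothing
SELECT relname, relreplident
FROM pg_class
WHERE oid = 'public.film'::regclass;

-- For a table without a primary key, record the values of all columns on change.
ALTER TABLE public.film REPLICA IDENTITY FULL;
```

Setting the replica identity to FULL writes the old values of every column to the WAL for each update and delete, so it can increase WAL volume on large or frequently updated tables.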

Limitations

- Hevo supports the logical replication of partitioned tables only for PostgreSQL version 12 or higher.

- Hevo does not support data replication from foreign tables, temporary tables, and views.

- If your Source table has indexes or constraints, you must recreate them in your Destination table, as Hevo does not replicate them. It only creates the existing primary keys.

- Hevo does not set the __hevo__marked_deleted field to True for data deleted from the Source table using the TRUNCATE command. This action could result in a data mismatch between the Source and Destination tables.

- You cannot select Source objects that Hevo marks as inaccessible for data ingestion during object configuration in the Pipeline. The following are some of the scenarios in which Hevo marks the Source objects as inaccessible:

  - The object is not included in the publication (key) specified while configuring the Source.

  - The publication is defined with a row filter expression. For such publications, rows for which the expression evaluates to FALSE or NULL are not published. For example, suppose a publication is defined as follows:

    CREATE PUBLICATION active_employees FOR TABLE employees WHERE (active IS TRUE);

    In this case, as Hevo cannot determine the changes made in the employees object, it marks the object as inaccessible.

  - The publication specified in the Source configuration does not have the privileges to publish the changes from the UPDATE and DELETE operations. For example, suppose a publication is defined as follows:

    CREATE PUBLICATION insert_only FOR TABLE employees WITH (publish = 'insert');

    In this case, as Hevo cannot identify the updated and deleted data in the employees table, it marks the object as inaccessible.
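If an object is marked as inaccessible, you can check how its publication is defined. The queries below are a sketch that reads the PostgreSQL system catalogs. The second query assumes PostgreSQL 15 or higher, where the attnames and rowfilter columns are available.

```sql
-- Operations that each publication publishes.
-- A value of false for pubupdate or pubdelete means those changes are not published.
SELECT pubname, pubinsert, pubupdate, pubdelete
FROM pg_publication;

-- Column lists and row filters for each published table (PostgreSQL 15 and higher).
SELECT pubname, schemaname, tablename, attnames, rowfilter
FROM pg_publication_tables;
```

A non-null rowfilter value indicates that the table is published with a row filter expression.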

See Also