Pipeline Jobs


This feature is currently available for Early Access. Please contact your Hevo account executive or Support team to enable it. Alternatively, request early access to try out one or more such features.

Note: This feature may not display accurate job statistics for Pipelines containing Python code-based or Drag-and-Drop Transformations.

A Job is an immutable set of tasks for all objects, child objects, and tables at the Source and Destination levels that are used to replicate your data. It provides you with both a high-level and a granular view of the entire data movement lifecycle from the Source to the Destination along with any associated metadata. Each job can be seen as one complete Pipeline run, encompassing all the stages of data replication: Ingestion → Transformation → Schema Mapping → Loading.
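To make this structure concrete, the following minimal Python sketch models a job as an immutable record that covers all four replication stages. The class and field names (`Stage`, `Job`, `job_id`, `objects`) are hypothetical and do not reflect Hevo's internal data model. The dataclass is frozen so its fields cannot be reassigned after creation, mirroring the description of a job as immutable.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    """Stages of data replication, declared in the order a job runs them."""
    INGESTION = 1
    TRANSFORMATION = 2
    SCHEMA_MAPPING = 3
    LOADING = 4


@dataclass(frozen=True)
class Job:
    """Hypothetical immutable view of one complete Pipeline run."""
    job_id: str
    objects: tuple[str, ...]  # objects, child objects, and tables covered by the run

    def stages(self) -> list[Stage]:
        # Enum members iterate in declaration order, so this is the replication order.
        return list(Stage)


job = Job(job_id="job-001", objects=("orders", "customers"))
print([s.name for s in job.stages()])
# ['INGESTION', 'TRANSFORMATION', 'SCHEMA_MAPPING', 'LOADING']
```

Attempting `job.job_id = "job-002"` raises `dataclasses.FrozenInstanceError`, which is the behavior the sketch uses to stand in for "immutable".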

You can view the job details to know:

- The timeliness of data replication.
- The correctness of the data volume.
- The Events being replicated at any stage in a Pipeline run (Job).
- The errors logged in each Pipeline run.

These details can help you identify any data mismatches, latencies, and Event consumption spikes.

When you configure your Pipeline, Hevo creates jobs based on the type of data to be ingested, for example, Historical or Incremental jobs. Each job is assigned a Job ID, which you can use to track the job details in your Audit Tables. For SaaS Sources and for Pipelines with Table as the ingestion mode, a job includes all the Source objects selected for ingestion and runs as per the defined Pipeline schedule. For log-based ingestion, Hevo reads the streaming logs every 5 minutes; however, the data is loaded every hour.
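The gap between reading and loading in log-based ingestion can be illustrated with a short Python sketch. It assumes, for illustration only, that both schedules start at minute 0, run at exact 5-minute and 60-minute intervals, and that a load picks up everything read strictly before it; actual run times depend on your Pipeline. The function names are hypothetical.

```python
READ_INTERVAL_MIN = 5    # Hevo reads the streaming logs every 5 minutes
LOAD_INTERVAL_MIN = 60   # the ingested data is loaded every hour


def reads_per_load(read_every: int = READ_INTERVAL_MIN,
                   load_every: int = LOAD_INTERVAL_MIN) -> int:
    """Number of log reads that accumulate before one load (assumes even division)."""
    return load_every // read_every


def wait_until_load(read_minute: int, load_every: int = LOAD_INTERVAL_MIN) -> int:
    """Minutes an Event read at `read_minute` waits for the next hourly load.

    Hypothetical model: a load at minute m picks up Events read strictly before m.
    """
    next_load = (read_minute // load_every + 1) * load_every
    return next_load - read_minute


print(reads_per_load())     # 12
print(wait_until_load(5))   # 55
print(wait_until_load(55))  # 5
```

Under these assumptions, an Event can wait up to an hour between being read and being loaded, which is worth keeping in mind when you check the timeliness of log-based jobs.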

You can view the jobs for all the Pipelines created by your team or view the jobs for a specific Pipeline. Further, within each job, you can view the details of each object.

Note: If your Pipeline uses Python code-based or Drag-and-Drop Transformations to modify ingested Events, the displayed job details, such as status and number of objects processed, may not be accurate.

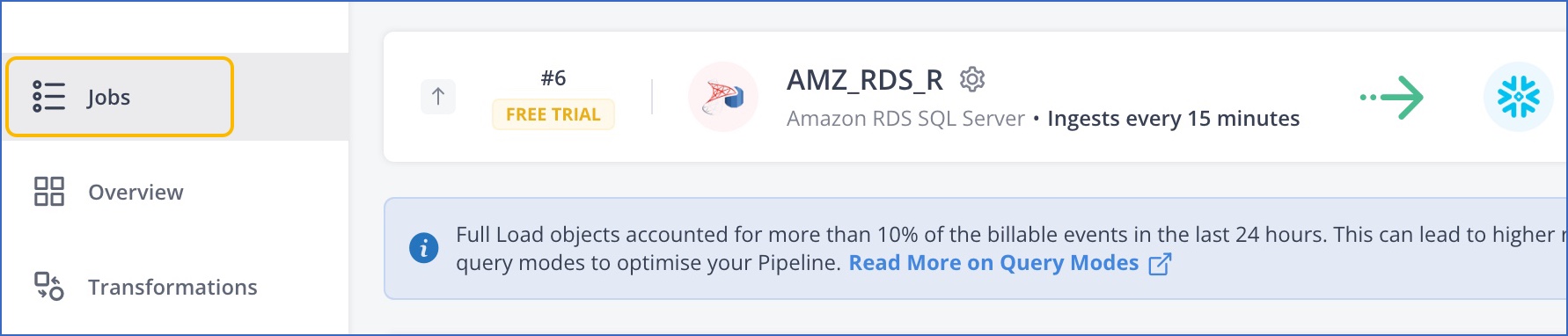

Accessing the Jobs List

Use the All Jobs tab on the Navigation Bar to view, track, and manage all your Pipeline jobs in one place.

Alternatively, use the Jobs tab on the Tools Bar of a Pipeline to view the list of jobs created for it.

The Jobs list displays the following summary information for a job:

| Column Name | Description |
|---|---|
| Mode | The job mode, or the nature of the data being ingested. This can be Historical, Incremental, or Refresher. |
| Pipeline | The name of the Pipeline along with the Source and Destination type. This detail is displayed only in the All Jobs view. |
| Started at | The time at which the job started. |
| Duration | The time for which the job ran. |
| Events - Ingested | The total number of Source Events ingested for the objects included in the job. |
| Events - Loaded | The number of Events loaded to the Destination for the objects included in the job. This count may not match the count of ingested Events if any Events fail or Transformations are applied. |
| Events - Failed | The number of Events that were not loaded to the Destination. For example, if the Destination does not support the incoming Source data type or decimal precision, the Events are sidelined. |
| Events - Billable | The number of billable Events in the job, counted across all its objects. For example, historical data is loaded for free, while incremental data is billable. |
| Status | The status of the job. This can be In Progress, Completed, or Skipped. Refer to the Job and Object Statuses table for details. |
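The following Python sketch models one row of the Jobs list as a record. The `JobSummary` class, its fields, and `mode_is_billable` are hypothetical. The billing rule covers only what the Events - Billable example states (historical data is free, incremental data is billable); this page does not describe how Refresher jobs are billed, so the sketch returns `None` for them rather than guessing.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class Mode(Enum):
    HISTORICAL = "Historical"
    INCREMENTAL = "Incremental"
    REFRESHER = "Refresher"


class Status(Enum):
    IN_PROGRESS = "In Progress"
    COMPLETED = "Completed"
    SKIPPED = "Skipped"


@dataclass
class JobSummary:
    """Hypothetical record mirroring one row of the Jobs list."""
    mode: Mode
    started_at: datetime
    duration: timedelta
    ingested: int
    loaded: int
    failed: int
    billable: int
    status: Status
    pipeline: Optional[str] = None  # shown only in the All Jobs view


def mode_is_billable(mode: Mode) -> Optional[bool]:
    """Billing by mode, per the example in the Events - Billable column.

    Returns None for Refresher jobs, whose billing this page does not describe.
    """
    if mode is Mode.HISTORICAL:
        return False  # historical data is loaded for free
    if mode is Mode.INCREMENTAL:
        return True   # incremental data is billable
    return None


row = JobSummary(
    mode=Mode.HISTORICAL,
    started_at=datetime(2024, 1, 15, 10, 0),
    duration=timedelta(minutes=12),
    ingested=1000,
    loaded=1000,
    failed=0,
    billable=0,
    status=Status.COMPLETED,
)
print(mode_is_billable(row.mode))  # False
```

`pipeline` is optional and placed last because the Pipeline column appears only in the All Jobs view, not in a single Pipeline's Jobs tab.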

The sum of loaded and failed Events in a job equals the number of ingested Events. If Transformations create or delete Events, the counts may not match.
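This relationship can be expressed as a simple reconciliation check. The Python sketch below reports the difference between ingested Events and the sum of loaded and failed Events instead of raising an error, because, as noted above, a mismatch is expected when Transformations create or delete Events. The function name and parameters are hypothetical.

```python
def count_mismatch(ingested: int, loaded: int, failed: int) -> int:
    """Return ingested - (loaded + failed).

    0 means the counts reconcile. A negative value can come from Transformations
    that create Events; a positive value from Transformations that delete Events.
    """
    return ingested - (loaded + failed)


print(count_mismatch(1000, 990, 10))  # 0: counts reconcile
print(count_mismatch(1000, 950, 10))  # 40: for example, Events dropped by a Transformation
```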

Job and Object Statuses

| Job Status | Description |
|---|---|
| In Progress | The job is initialized and is currently running. |
| Completed | The job completed successfully, and the ingested Events are loaded to the Destination. |
| Skipped | The job is skipped because there is no object to ingest or another job is currently in progress. |

If any object has Event failures during a job run, the job remains In Progress while Hevo tries to replay the failed Events. If the job details show an object with failed Events, you can restart the object using the Restart Now or Run Now action. This creates a Historical job containing only that object.
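The status rules in the table and the paragraph above can be summarized as a decision function. This Python sketch is one reading of those rules, not Hevo's implementation; the inputs (`object_count`, `another_job_running`, `run_finished`, `pending_failed_events`) and the `RestartedJob` type are hypothetical. It also shows that restarting an object yields a Historical job scoped to that object alone.

```python
from dataclasses import dataclass


def job_status(object_count: int, another_job_running: bool,
               run_finished: bool, pending_failed_events: int) -> str:
    """Derive a job status from the rules described on this page (hypothetical inputs)."""
    if object_count == 0 or another_job_running:
        return "Skipped"      # nothing to ingest, or another job is already in progress
    if not run_finished or pending_failed_events > 0:
        return "In Progress"  # still running, or Hevo is replaying failed Events
    return "Completed"


@dataclass(frozen=True)
class RestartedJob:
    mode: str
    objects: tuple[str, ...]


def restart_object(object_name: str) -> RestartedJob:
    """Restart Now or Run Now on an object creates a Historical job with only that object."""
    return RestartedJob(mode="Historical", objects=(object_name,))


print(job_status(3, False, True, 5))  # In Progress
print(restart_object("orders"))       # RestartedJob(mode='Historical', objects=('orders',))
```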

Viewing Job and Object Details

To view the details of a job, such as the list of objects processed by it and the information about each object:

- Click the arrow at the right to view additional details for the job.
- View the job details and the list of objects processed in it.
- Optionally, click the drop-down next to an object to view the ingestion and loading details for it.

The object details section displays:

- The ingestion and loading stages of data replication.
- The details of each stage, for example, the start time and duration of the task, the number of Events read versus ingested or loaded, and the count of failed Events, if any.
- The number of failed Events for each failure reason. Hevo automatically resolves transient errors, and no action is required from you for these.
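The per-reason failure counts in the object details can be thought of as a group-by over failed Events. This Python sketch tallies failure records by reason and filters out reasons treated as transient. This page does not list which reasons Hevo classifies as transient, so the `TRANSIENT_REASONS` set and the reason strings are illustrative assumptions ("unsupported data type" echoes the Events - Failed example).

```python
from collections import Counter

# Hypothetical reason labels; the actual reasons shown by Hevo may differ.
TRANSIENT_REASONS = {"destination timeout"}


def failures_by_reason(reasons: list[str]) -> Counter:
    """Count failed Events per failure reason, as the object details section displays them."""
    return Counter(reasons)


def needs_action(counts: Counter) -> dict[str, int]:
    """Keep only non-transient reasons; transient errors are resolved automatically."""
    return {reason: n for reason, n in counts.items() if reason not in TRANSIENT_REASONS}


failed = ["unsupported data type", "destination timeout", "unsupported data type"]
counts = failures_by_reason(failed)
print(counts["unsupported data type"])  # 2
print(needs_action(counts))             # {'unsupported data type': 2}
```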



Revision History

Refer to the following table for the list of key updates made to this page:

| Date | Release | Description of Change |
|---|---|---|
| May-12-2025 | NA | Added a note stating that Job Monitoring works best for Pipelines that do not transform Events. |
| Jan-15-2024 | 2.19.1 | New document. |