Shopify App


From Release 2.33.1 onwards, Hevo uses version 2024-10 of the Shopify API to fetch your data. This upgrade impacts the following objects:

| Object | Changes |
|---|---|
| Country | Removal of the country object. |
| Product | The Product object is moved to Shopify's GraphQL API; removal of the product, product_variant, and product_images objects; addition of the product_v2, product_variants_v2, and product_media_list objects. |
| UsageCharge | Removal of the billing_on field. |
| Order | Addition of the current_quantity field to the line_items object; addition of the is_removed field to the shipping_line object. |
| FulfillmentOrder | Addition of the include_order_reference_fields and include_financial_summaries fields; addition of the presentedName field to the DeliveryMethod object. |
| Balance Transactions | Addition of the adjustment_order_transactions and adjustment_reason fields. |
| Inventory Locations | Removal of the localized_country_name and localized_province_name fields. |
| Draft Order | Addition of the is_removed field to the shipping_line object. |
| Abandoned Checkout | Addition of the is_removed field to the shipping_line object. |
| Transaction | Addition of the amount_rounding field. |

To avoid data loss, we recommend adjusting any downstream queries, reports, or transformations that use the affected objects and fields. The upgrade is seamless and causes no downtime for your Pipelines. However, if you need historical data for product_v2, product_variants_v2, or any of the new fields, you can restart the historical load for the respective object.

Note: If the upgraded Pipeline does not show the product_v2 object or the expected new fields, it may be due to the absence of product data in the Source. The object is created only when data, either incremental or historical, is available. In such cases, you can restart the object to trigger a sync.

This change applies to all new and existing Pipelines created with this Source.
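If you maintain downstream queries or Models that reference the removed objects, a quick audit can flag them before they break. The following Python sketch is illustrative only: `audit_tables` is a hypothetical helper, not part of Hevo, and the one-to-one pairing of each removed product object with a new object is an assumption inferred from the order in which the upgrade table lists them. Table names in your Destination may also carry prefixes, so adapt the keys accordingly.

```python
# Hypothetical helper for auditing downstream usage after the 2024-10 upgrade.
# ASSUMPTION: each removed product object maps to the new object listed in the
# same position in the upgrade table; verify against your Destination schema.
REPLACEMENTS = {
    "product": "product_v2",
    "product_variant": "product_variants_v2",
    "product_images": "product_media_list",
    "country": None,  # removed, no replacement object listed
}


def audit_tables(tables_in_use):
    """Return {removed_object: suggested_replacement_or_None} for affected tables."""
    return {t: REPLACEMENTS[t] for t in tables_in_use if t in REPLACEMENTS}


if __name__ == "__main__":
    used = ["order", "product", "product_variant", "country"]
    for old, new in audit_tables(used).items():
        print(f"{old} -> {new or 'no replacement; remove dependency'}")
```

Tables that are not affected, such as order, are simply left out of the result.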

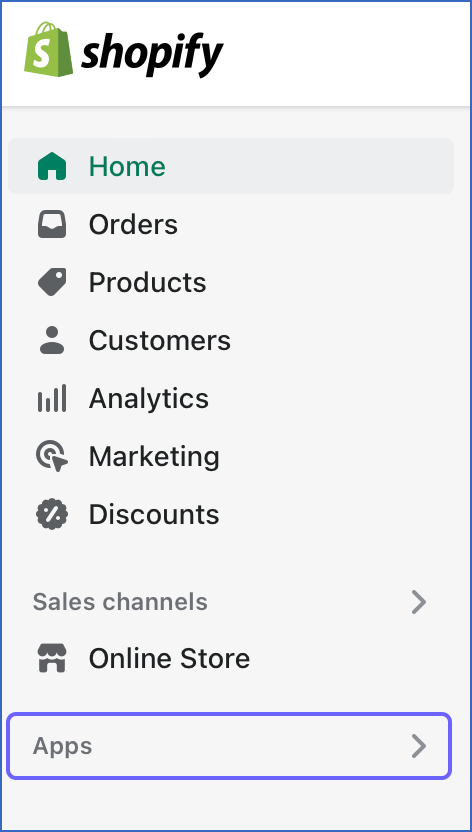

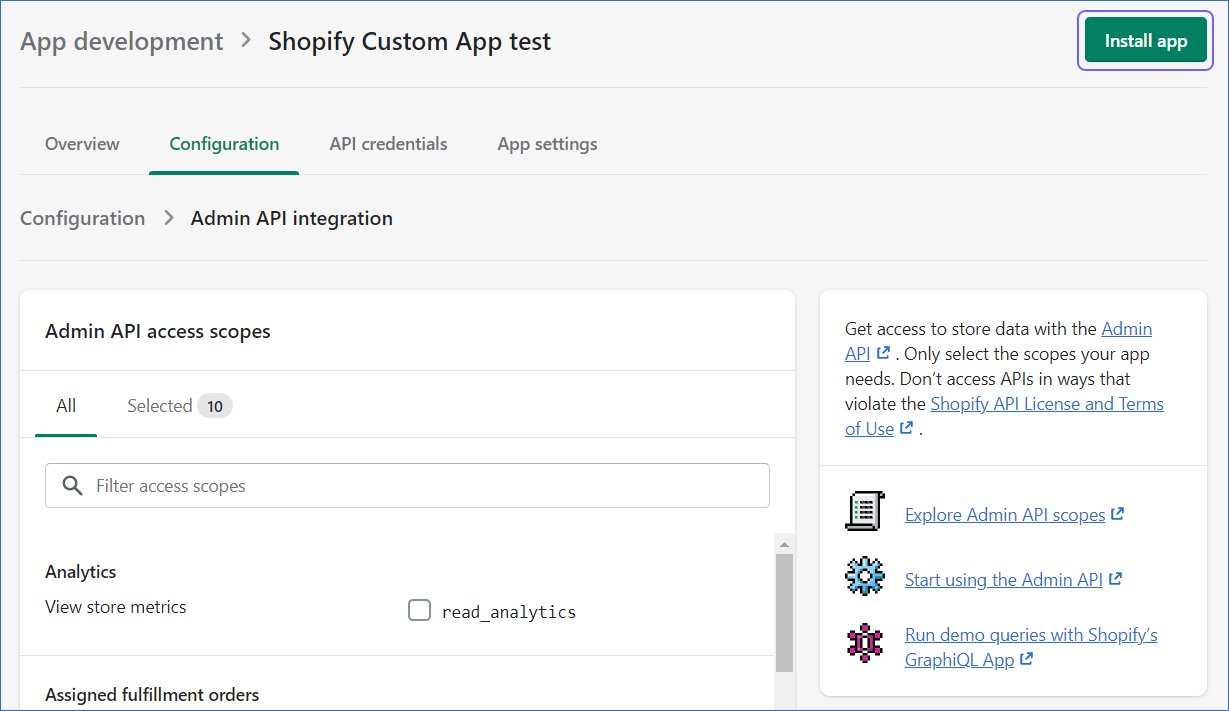

Shopify uses the concept of a custom app to allow access to store data for a merchant. A custom app works exclusively with your Shopify store, unlike a public app, which is built to work with many stores. The app is configured with the required Admin API scopes to fetch the different types of data from the store using Shopify's REST APIs (and, from Release 2.33.1, Shopify's GraphQL API for Product data). You must install this app to view the API token, which is then used to set up a Pipeline in Hevo with Shopify as the Source.
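To illustrate what the API token from the custom app is used for, the following minimal Python sketch builds an authenticated request to Shopify's Admin REST API at version 2024-10. Custom-app Admin API tokens are sent in the `X-Shopify-Access-Token` header. The store name, token value, and the helper names `build_admin_request` and `fetch` are placeholders for illustration; this is not how Hevo itself calls Shopify.

```python
import json
import urllib.request

API_VERSION = "2024-10"  # Shopify API version Hevo uses from Release 2.33.1


def build_admin_request(store: str, token: str, resource: str) -> urllib.request.Request:
    """Build a GET request for an Admin REST resource such as 'orders'.

    store and token are placeholders for your store subdomain and the
    Admin API access token shown after installing the custom app.
    """
    url = f"https://{store}.myshopify.com/admin/api/{API_VERSION}/{resource}.json"
    return urllib.request.Request(url, headers={"X-Shopify-Access-Token": token})


def fetch(store: str, token: str, resource: str) -> dict:
    """Send the request and decode the JSON response (requires network access)."""
    with urllib.request.urlopen(build_admin_request(store, token, resource)) as resp:
        return json.load(resp)
```

For example, `fetch("your-store", "<token>", "orders")` would request the store's orders resource, provided the app has been granted the `read_orders` scope.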

Transferring data from your Shopify store to your Destination therefore involves the following one-time setup steps:

-

Creating an app in Shopify.

-

Assigning permissions to the app to read and transform the data using Shopify’s Rest API.

-

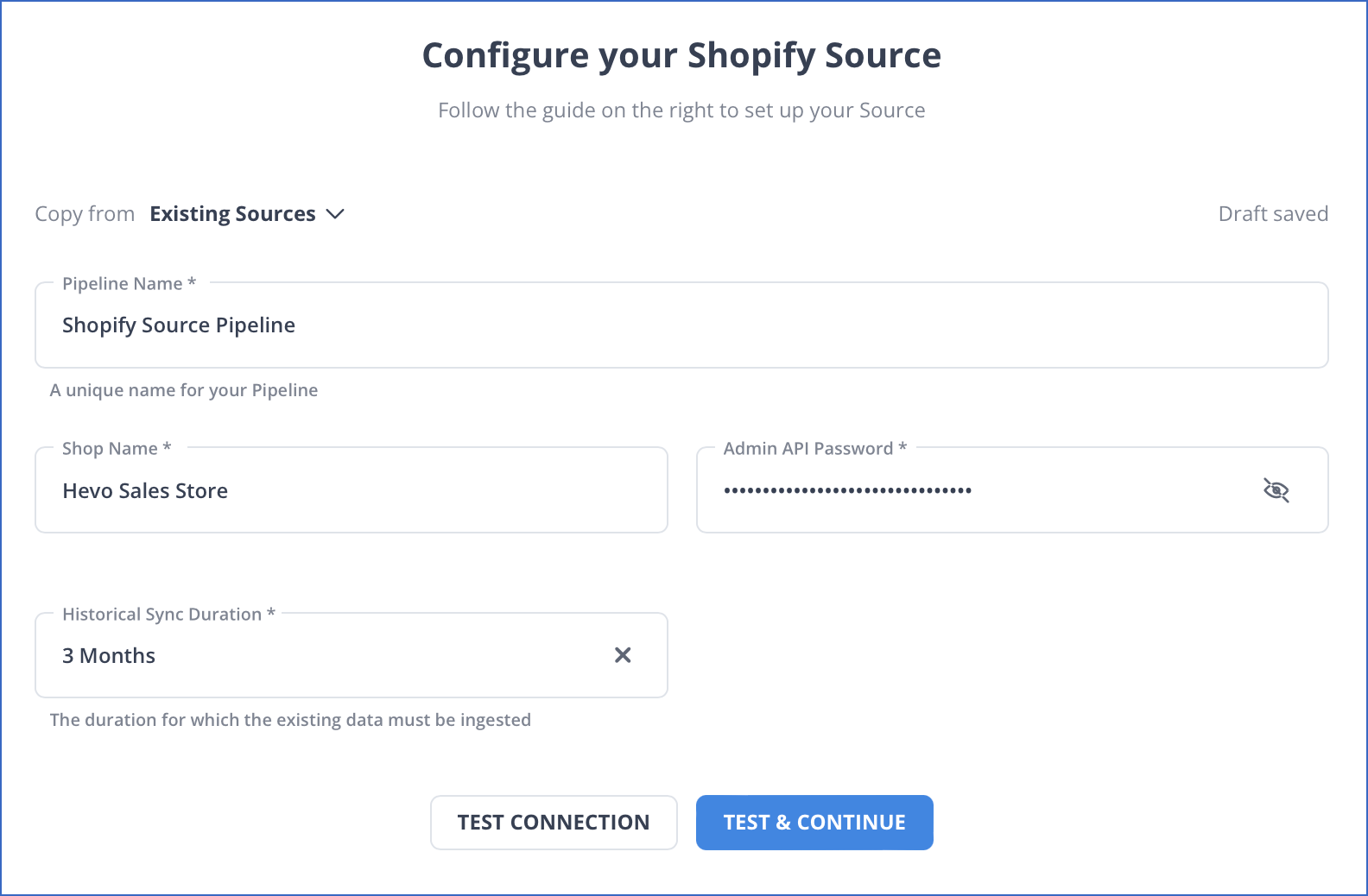

Creating a Pipeline in Hevo for transferring data from Shopify to the Destination database or data warehouse.
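After assigning permissions, you can optionally confirm which Admin API scopes the app was granted. Shopify exposes them through the `GET /admin/oauth/access_scopes.json` endpoint, whose response contains an `access_scopes` list of `{"handle": ...}` entries. The following Python sketch compares such a response with a set of required scopes. The `REQUIRED` set and the `missing_scopes` helper are hypothetical and illustrative only; they are not Hevo's actual list of required permissions.

```python
import json

# ILLUSTRATIVE ONLY: substitute the scopes listed in Hevo's setup instructions.
REQUIRED = {"read_orders", "read_products", "read_customers"}


def missing_scopes(access_scopes_json: str, required: set) -> set:
    """Return the required scope handles absent from an access_scopes.json body."""
    granted = {entry["handle"] for entry in json.loads(access_scopes_json)["access_scopes"]}
    return required - granted


if __name__ == "__main__":
    sample = '{"access_scopes": [{"handle": "read_orders"}, {"handle": "read_products"}]}'
    print(sorted(missing_scopes(sample, REQUIRED)))  # ['read_customers']
```

An empty result means every scope in the required set has been granted.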

A Pipeline only transfers data to the specified Destination. You need to use appropriate tools for transforming the data for further analysis or for publishing it through your e-commerce portal. Read Models.

Limitations

- OAuth authentication is not supported in private apps.
- Hevo captures deletes only for the Product object, and only for deletes that occur after Release 1.85.
- Hevo does not capture cascading deletes of the Product object. In Shopify, a Product object can have the child objects Product Image and Product Variant. When a product is deleted in Shopify, its associated images and variants are deleted as well. However, Hevo captures only the information of the deleted product, not of the images and variants associated with it.
- Hevo does not load a column's data into the Destination table if the column exceeds 16 MB, and it skips an Event that exceeds 40 MB. If an Event contains a column larger than 16 MB, Hevo attempts to load the Event after dropping that column's data. However, if the Event still exceeds 40 MB, the Event is dropped as well. As a result, you may see discrepancies between your Source and Destination data. To avoid this, ensure that each Event contains less than 40 MB of data.
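The size rules in the last limitation can be read as a two-step decision procedure. The following Python sketch models them for a single Event represented as a mapping from column name to raw bytes, which can help you spot records likely to be dropped. The representation, the 2**20-byte megabyte, and treating Event size as the sum of column sizes are assumptions, and `apply_size_limits` is a hypothetical helper, not Hevo's implementation.

```python
from typing import Dict, Optional

MB = 1024 * 1024  # ASSUMPTION: 1 MB = 2**20 bytes
COLUMN_LIMIT = 16 * MB
EVENT_LIMIT = 40 * MB


def apply_size_limits(
    event: Dict[str, bytes],
    column_limit: int = COLUMN_LIMIT,
    event_limit: int = EVENT_LIMIT,
) -> Optional[Dict[str, bytes]]:
    """Model the documented rules: drop oversized columns, then oversized Events.

    ASSUMPTION: an Event's size is the sum of its column payload sizes;
    serialization overhead is ignored.
    """
    # Step 1: drop the data of any column larger than the column limit.
    kept = {col: val for col, val in event.items() if len(val) <= column_limit}
    # Step 2: if the remaining Event still exceeds the Event limit, skip it.
    if sum(len(val) for val in kept.values()) > event_limit:
        return None
    return kept
```

With scaled-down limits, `apply_size_limits({"id": b"1", "body": b"x" * 20}, column_limit=16, event_limit=40)` returns `{"id": b"1"}`: the 20-byte column is dropped, and the remaining Event fits within the Event limit.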


Revision History

Refer to the following table for the list of key updates made to this page:

| Date | Release | Description of Change |
|---|---|---|
| Jul-07-2025 | NA | Updated the Limitations section to add information about the maximum Event and column size. Updated the Data Model section to add information about the behaviour of the Balance Transaction object for older Pipelines. |
| Feb-24-2025 | 2.33.1 | Added a warning container in the page overview about the API upgrade. Updated the Schema and Primary Keys and Data Model sections as per API version 2024-10. |
| Jan-07-2025 | NA | Updated the Limitations section to add information on Event size. |
| Mar-05-2024 | 2.21 | Updated the ingestion frequency table in the Data Replication section. |
| Sep-11-2023 | NA | Added a warning container at the top of the page about the API migration. |
| Feb-20-2023 | NA | Updated the Configuring Shopify App as a Source section with information about the historical sync duration. |
| Jan-23-2023 | 2.06 | Updated the Data Model section with two additional objects that Hevo now supports, Customer Journey Summary and Customer Visit. Updated the Schema and Primary Keys section to add the new ERD link with the two additional objects. |
| Dec-07-2022 | NA | Updated the Create an App in Shopify section according to the latest Shopify UI. |
| Oct-17-2022 | 1.99 | Updated the Data Model section with information about the new objects that Hevo ingests. |
| Jul-27-2022 | NA | Updated the note in the Data Replication section. |
| May-23-2022 | NA | Updated the Create an App in Shopify and Configure API Permissions in Shopify sections to include information about custom apps in Shopify. |
| Apr-11-2022 | 1.86 | Added a note in the Data Replication section about optimized quota consumption for Full Load objects. |
| Apr-11-2022 | 1.85 | Updated the Data Replication section to add information about the handling of deletes for the Product object. Added limitations about capturing deletes. |
| Jan-24-2022 | 1.80 | Added information about the configurable historical sync duration in the Data Replication section. |
| Oct-25-2021 | NA | Added the Pipeline frequency information in the Data Replication section. |
| Sep-09-2021 | 1.71 | Updated the Data Model section to mention the new objects that Hevo now ingests. |
| Jul-12-2021 | 1.67 | Updated the Data Model section with additional objects that Hevo now supports and merged the Appendix into it. |
| Jun-14-2021 | 1.65 | Updated the default historical load duration to one year in the Data Replication section and suggested the Change Position option to fetch Events older than one year or more recent Events. |