Google Sheets

On This Page

User account-based authentication is no longer supported for the Google Sheets Source. We recommend that you migrate your existing Pipelines to a service account for uninterrupted data replication. Read Migrating User Account-Based Pipelines to Service Account for the steps to do this.

Hevo can replicate your Google Sheets data to your Destination using Google’s Sheets API.

User Authentication

You can connect to the Google Sheets Source only via service accounts. One service account can be mapped to your entire team. Read Google Account Authentication Methods to know how to set up a service account if you do not already have one.

Pipeline Behavior

-

Values in the first row of the Sheet are treated as field headers. These headers need to be unique. Read Treatment of Duplicate Column Headers.

If a header is missing, then the column name is used as the header.

-

Renaming your Sheet does not cause your Pipeline to break.

-

Row number is used as a primary key which is represented by a field called

__hevo_idin your Destination table. Read Treatment of Duplicate Column Headers. -

Cells containing formulas or scientific numbers are loaded as their calculated value.

Example: 6.16E+12 is loaded as 6160000000000.

-

Search option while selecting your Sheets only works on prefixes.

-

The Pipeline supports only Google Sheets. For MS Excel files, please check out the Drive Source.

Treatment of Duplicate Column Headers

To identify each column in the Source uniquely during ingestion, Hevo renames any duplicate column headers that are found, as:

-

Columns with the same header: The column header is changed to lowercase and the column number is suffixed to it. For example, if there are three columns, test, test, and test in columns G, H, and K, respectively, these are changed to test_g, test_h, and test_k. The same happens for headers with space between them. For example, if there are three columns, Software Used, Software Used, and Software Used in columns H, I, and J, respectively, these are changed to software_used_h, software_used_i, and software_used_j.

-

Columns with same header but different case: All column headers are changed to lowercase and assigned a sequential, numeric suffix. For example, if there are three columns, test, Test, and tEst, these are changed to test, test_1, and test_2 during the Auto Mapping phase. The same happens for headers with space between them. For example, if there are two columns, Software Used and Software used, these are changed to software_used, and software_used_1.

-

Columns with __Hevo_id as the header: The column header is changed to __hevo_id, and the __hevo_id column that Hevo adds to keep track of offsets during ingestion is named as __hevo_id_1.

-

Columns with __hevo_id as the header: Hevo suffixes the column number to the column header. For example, if the __hevo_id_ column lies in column number B, it is renamed to __hevo_id_b. If the __hevo_id_b column is also present in the Source column list, the original __hevo_id column is named to __hevoid_b_b. This is done because Hevo adds the column, __hevo_id_ as a primary key to keep track of offsets during the ingestion process. Towards this purpose, any existing column with that name is renamed.

Source Considerations

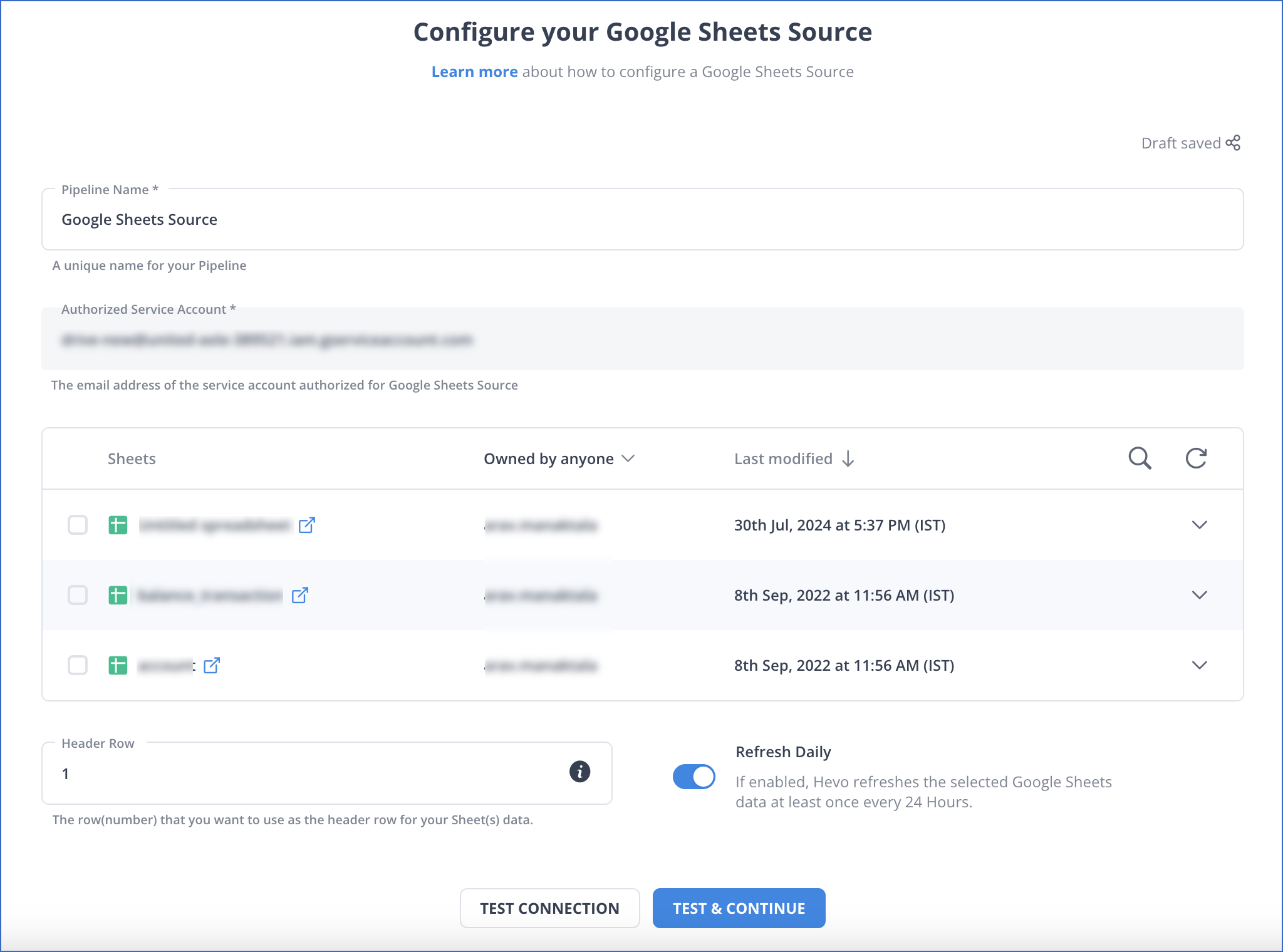

We recommend enabling the Refresh Daily option in the Source configuration if you are importing data from other worksheets into the Google Sheets selected for replication. At times, there can be a delay between the last_modified_at timestamp change and the data getting successfully imported. As a result, no new data may get ingested if Hevo polls for a last_modified_at time between this delay, as the import operation would still be incomplete but the timestamp-based offset would have been incremented. A daily refresh ensures all such data gets synchronized to the Destination successfully by re-ingesting all the data in the Google Sheets if no changes are detected for 24 hours. All Events ingested during data refresh count towards your Events quota consumption.

Billing Considerations

The first load of the historical data is free for Google Sheets. Subsequently, if any data changes in a Sheet at Source, the entire Sheet is re-ingested as there is no way to identify the change. All such subsequent loads count towards your Events quota consumption and are billed accordingly.

Limitations

-

The

hevo_marked_deletedcolumn is displayed in the Destination; however, Hevo does not capture the information for records deleted in your sheet(s). -

Hevo does not load data from a column into the Destination table if its size exceeds 16 MB, and skips the Event if it exceeds 40 MB. If the Event contains a column larger than 16 MB, Hevo attempts to load the Event after dropping that column’s data. However, if the Event size still exceeds 40 MB, then the Event is also dropped. As a result, you may see discrepancies between your Source and Destination data. To avoid such a scenario, ensure that each Event contains less than 40 MB of data.

See Also

Revision History

Refer to the following table for the list of key updates made to this page:

| Date | Release | Description of Change |

|---|---|---|

| Jul-07-2025 | NA | Updated the Limitations section to inform about the max record and column size in an Event. |

| Jan-07-2025 | NA | Updated the Limitations section to add information on Event size. |

| Aug-19-2024 | NA | Updated section, Configuring Google Sheets as a Source to remove mentions of user account-based authentication. |

| Jul-16-2024 | 2.25.2 | Updated section, Data Replication to change the default ingestion frequency to 12 Hrs. |

| Mar-05-2024 | 2.21 | Updated the ingestion frequency table in the Data Replication section. |

| Jul-20-2023 | NA | Added section, Limitations to add information about Hevo not capturing deletes. |

| Jun-26-2023 | 2.14 | Updated section, Configuring Google Sheets as a Source to add information about custom header row. |

| Apr-11-2022 | NA | Updated screenshot in the Configuring Google Sheets as a Source section to reflect the latest UI. |

| Feb-21-2022 | 1.82 | Added section, Treatment of Duplicate Column Headers. |

| Dec-06-2021 | 1.77 | - Added section, Source Considerations. - Updated section, Configuring Google Sheets as a Source to add information about data refresh. |

| Oct-25-2021 | NA | Added the Pipeline frequency information in the Data Replication section. |

| Sep-20-2021 | 1.72 | Added the section, Data Replication. |

| May-05-2021 | 1.62 | Added steps to connect to Google Sheets Source using a service account. |

| Apr-13-2021 | NA | Added the section, Billing Considerations. |