Resolving Incompatible Schema Mappings


For the Pipeline to load Events from the Source into the selected Destination, the Source Event Types and their fields must be correctly mapped to Destination tables and columns. For example, if a Source field of type String is mapped to a Destination field of type int, the Source values are not guaranteed to be valid int values. A value such as 5e9, for instance, cannot be converted to an int, so loading it fails. Read Schema Mapper Compatibility Table for the Source and Destination data types supported by Hevo.
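To illustrate why a String-to-int mapping is risky, the following minimal sketch checks String values before they are loaded into a column that is assumed here to be a 32-bit signed int. The function name `fits_int32` and the 32-bit range are illustrative assumptions: Destinations differ in their integer widths, and this is not how Hevo itself validates values. Note that 5e9 is scientific notation for 5,000,000,000, which is neither a plain integer literal nor within the 32-bit range.

```python
import re

# Assumed target: a 32-bit signed integer column (illustrative only).
INT32_MIN, INT32_MAX = -2**31, 2**31 - 1


def fits_int32(value: str) -> bool:
    """Return True if a String value can be loaded losslessly into a 32-bit int column."""
    # Accept only plain integer literals such as "42" or "-7";
    # "5e9", "3.5", and "abc" are rejected here.
    if re.fullmatch(r"[+-]?[0-9]+", value) is None:
        return False
    # Even a plain literal must fall within the column's range.
    return INT32_MIN <= int(value) <= INT32_MAX


values = ["42", "-7", "5e9", "5000000000", "abc"]
rejected = [v for v in values if not fits_int32(v)]
# rejected == ["5e9", "5000000000", "abc"]
```

A strict regular expression is used instead of relying on `int()` alone because Python's `int()` also accepts surrounding whitespace and underscores (for example, `"1_000"`), which a typical warehouse int column would not.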

If Hevo detects any incompatible mappings between Source Events and Destination tables and fields, it flags them in the Schema Mapper in several ways.

Identifying Incompatible Schema Mappings

The number of Event Types with incompatible mappings is shown on the Schema Mapper icon. In addition, a Warning icon is displayed next to each incorrectly mapped Event Type to indicate that its schema mapping has unresolved issues.


Click any Event Type marked with a Warning icon to view its incompatible fields in the right pane. Each incompatibly mapped field also has a Warning icon next to its name.

In the image below, the Source field is of type int while the mapped field in the Destination is of type timestamp, and therefore, a Warning icon is displayed.

You can hover over the Warning icon to view the details of the incompatibility and the courses of action available to you.
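The flags described above can be pictured as a lookup of each field's Source and Destination types against a compatibility table. The following sketch is a hypothetical model, not Hevo's implementation: `COMPATIBLE_PAIRS` is an illustrative subset of type pairs (the authoritative list is the Schema Mapper Compatibility Table), and the function names are invented for this example. It lists the incompatible fields within an Event Type and counts the Event Types that would carry a Warning icon, reproducing the int-to-timestamp case described above.

```python
from typing import Dict, List, Tuple

# Hypothetical subset of (Source type, Destination type) pairs treated as compatible.
COMPATIBLE_PAIRS = {
    ("int", "int"), ("int", "long"), ("int", "decimal"),
    ("long", "long"), ("long", "decimal"),
    ("string", "varchar"),
    ("timestamp", "timestamp"),
}

# Field name -> (Source type, Destination type)
FieldMapping = Dict[str, Tuple[str, str]]


def incompatible_fields(mapping: FieldMapping) -> List[str]:
    """Fields that would be flagged with a Warning icon in this sketch."""
    return sorted(name for name, pair in mapping.items() if pair not in COMPATIBLE_PAIRS)


def warning_count(event_types: Dict[str, FieldMapping]) -> int:
    """Number of Event Types with at least one incompatible field."""
    return sum(1 for mapping in event_types.values() if incompatible_fields(mapping))


event_types = {
    "orders": {"id": ("int", "int"), "created_at": ("int", "timestamp")},
    "users": {"name": ("string", "varchar")},
}
# incompatible_fields(event_types["orders"]) == ["created_at"]
# warning_count(event_types) == 1
```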

Resolving Mapping Errors

The warnings in Schema Mapper are meant to preemptively inform you about potentially incompatible mappings and help you identify and resolve them. If you are using an existing Destination table, you can do one of the following:

-

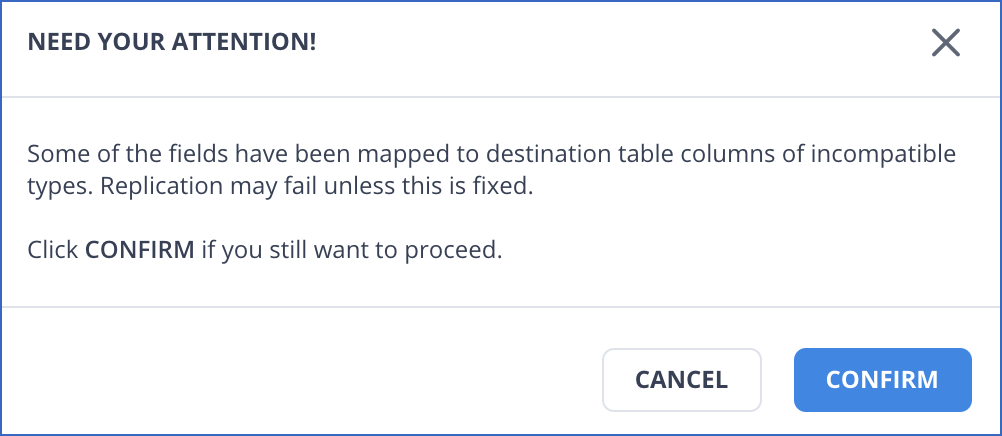

Proceed with these inconsistencies in the data as they are. For this, click APPLY CHANGES. Hevo displays a dialog box to confirm your action. Click CONFIRM to proceed.

-

Skip the Event that has warnings. Again, a warning is displayed that the data for the field would not be replicated to the Destination table and would be lost. Note: Hevo does not retain the data for the skipped field anywhere.

-

Select a compatible Destination field and type to fix the issue, and then click APPLY CHANGES.

Resolving Schema Mapping Incompatibilities with a New Table

If you create a new Destination table during schema mapping, Hevo by default creates its columns with data types that are compatible with the Source fields. Unless you later manually select an incompatible field type, no incompatibilities are expected in this scenario.

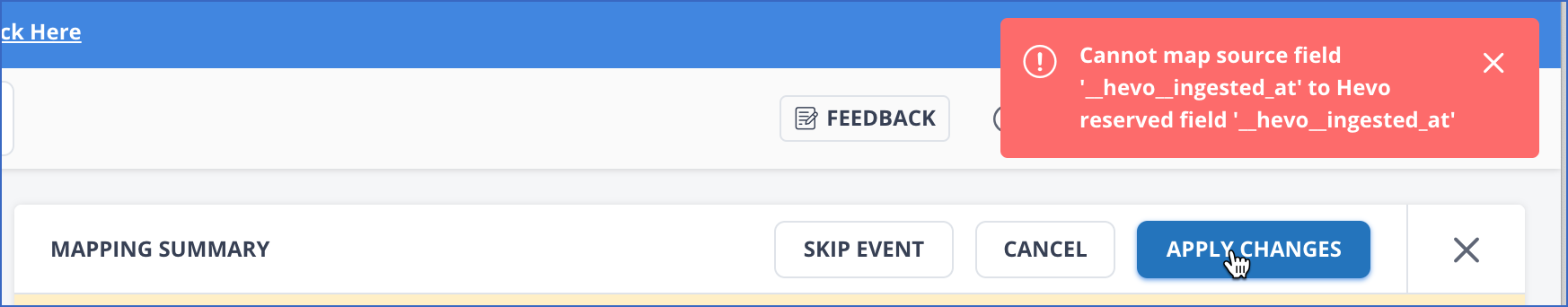

However, Source fields whose names match Hevo's reserved fields cannot be mapped to Destination columns with the same name. For example, __hevo__ingested_at is a reserved field in Hevo. If a Source field has this name, it may be included as is when the Destination table is created, and applying the mapping then generates an error.

To resolve this, rename the mapped Destination field to something different, for example, __hevo__ingestion_time. Because this is a manual step in an otherwise automated process, you must explicitly confirm the change before it can be applied.
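The rename step can be sketched as a check that no mapped Destination column still uses a reserved name. This is a hypothetical illustration, not Hevo's API: `RESERVED_FIELDS` contains only the one reserved name given on this page (Hevo's actual list may be longer), and `find_reserved` and `apply_renames` are invented helper names.

```python
from typing import Dict, List

# Only __hevo__ingested_at is named on this page; the full reserved list may be longer.
RESERVED_FIELDS = {"__hevo__ingested_at"}


def find_reserved(columns: List[str]) -> List[str]:
    """Return the Destination column names that clash with reserved fields."""
    return [c for c in columns if c in RESERVED_FIELDS]


def apply_renames(columns: List[str], renames: Dict[str, str]) -> List[str]:
    """Apply the user's renames, then verify that no reserved or duplicate names remain."""
    renamed = [renames.get(c, c) for c in columns]
    clashes = find_reserved(renamed)
    if clashes:
        raise ValueError(f"reserved field names are still mapped: {clashes}")
    if len(set(renamed)) != len(renamed):
        raise ValueError("renaming produced duplicate column names")
    return renamed


columns = ["id", "__hevo__ingested_at"]
fixed = apply_renames(columns, {"__hevo__ingested_at": "__hevo__ingestion_time"})
# fixed == ["id", "__hevo__ingestion_time"]
```

Raising an error instead of silently choosing a new name mirrors the page's point that the rename is a manual step that you must confirm explicitly.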

Handling Different Data Types in Source Data

During schema mapping, Hevo automatically promotes (widens) the data type of a Destination column when needed, so that the column can accommodate the full range of values in the Source data. This lets Hevo load existing and new data losslessly into the Destination column and prevents Events from being sidelined due to data type mismatches. However, if data type promotion is not possible, the related Source Events are sidelined and an error is displayed.

For example, suppose a field in the first few Source Events contains values of data type long. Based on this, Hevo creates a Destination column of type long. Now, suppose the next few Events contain decimal values for the same field. In this case, Hevo promotes the data type of the Destination column to decimal, which has a larger precision and can accommodate both long and decimal values.

Note: Hevo does not promote the data type of a field if it is a primary key.
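The long-to-decimal example and the primary key rule can be modeled with a small widening function. This is a minimal sketch under stated assumptions, not Hevo's promotion logic: the three-step `NUMERIC_LADDER` is an illustrative ordering, and treating an unpromotable primary key value as sidelined is an assumption based on the statement that Events are sidelined when promotion is not possible.

```python
from typing import Optional

# Illustrative widening order (narrowest first); Hevo's real rules cover more types.
NUMERIC_LADDER = ["int", "long", "decimal"]


def promote(column_type: str, incoming_type: str, is_primary_key: bool = False) -> Optional[str]:
    """Return the column type after receiving a value of incoming_type,
    or None if the Event would be sidelined."""
    if column_type == incoming_type:
        return column_type  # already compatible
    if column_type not in NUMERIC_LADDER or incoming_type not in NUMERIC_LADDER:
        return None  # no known promotion path, so the Event is sidelined
    wider = max(column_type, incoming_type, key=NUMERIC_LADDER.index)
    if wider == column_type:
        return column_type  # the existing column already fits the value
    if is_primary_key:
        return None  # primary key columns are never promoted (assumed: Event sidelined)
    return wider


column = "long"
for observed in ["long", "long", "decimal", "long"]:
    column = promote(column, observed)
# column is now "decimal": later long values still fit without further changes
```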

Data type promotion is currently available for:

- The following data warehouse Destinations:

  - Amazon Redshift

  - Azure Synapse Analytics

  - Google BigQuery, where the table size is less than 50 GB

  - Snowflake, where the table size is less than 50 GB

  Note: If a Google BigQuery or Snowflake table grows beyond 50 GB after a column's data type has been promoted, values of the types already factored in continue to load without issue, because the Destination column now has the more relaxed data type.

- New teams created in or after Hevo Release 1.56 for Google BigQuery, Release 1.58 for Snowflake, and Release 1.60 for Amazon Redshift.

  Note: Your Hevo release version is mentioned at the bottom of the Navigation Bar.

- Pipelines where Auto Mapping is enabled.
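The availability conditions listed above can be summarized as a single eligibility check. This sketch assumes that all of the conditions must hold at the same time, which the list does not state outright. It also assumes that Azure Synapse Analytics has no minimum team release, because none is given. The Destination identifiers and the function name are hypothetical.

```python
from typing import Dict, Tuple

# Minimum release in which the team must have been created, per the list above.
MIN_TEAM_RELEASE: Dict[str, Tuple[int, ...]] = {
    "google_bigquery": (1, 56),
    "snowflake": (1, 58),
    "amazon_redshift": (1, 60),
}
SUPPORTED = {"amazon_redshift", "azure_synapse", "google_bigquery", "snowflake"}
SIZE_LIMITED = {"google_bigquery", "snowflake"}
SIZE_LIMIT_GB = 50


def parse_release(release: str) -> Tuple[int, ...]:
    """Turn a release string such as "1.60" into a comparable tuple (1, 60)."""
    return tuple(int(part) for part in release.split("."))


def promotion_supported(destination: str, table_size_gb: float,
                        team_created_release: str, auto_mapping_enabled: bool) -> bool:
    if destination not in SUPPORTED or not auto_mapping_enabled:
        return False
    if destination in SIZE_LIMITED and table_size_gb >= SIZE_LIMIT_GB:
        return False  # BigQuery and Snowflake tables must be smaller than 50 GB
    minimum = MIN_TEAM_RELEASE.get(destination)
    if minimum is not None and parse_release(team_created_release) < minimum:
        return False  # team created before the release that introduced the feature
    return True
```

Comparing releases as integer tuples avoids the string-comparison trap in which "1.6" and "1.60" would be treated inconsistently.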

Notes:

- The column order might change when Hevo converts column data types.

- Any Models and Views that you have created may be impacted if the Source data contains multiple data types for the same field.

Revision History

Refer to the following table for the list of key updates made to this page:

| Date | Release | Description of Change |
|---|---|---|
| Mar-10-2023 | NA | Updated the section, Handling Different Data Types in Source Data, to reorganize the content and add Azure Synapse Analytics to the list of data warehouse Destinations. |
| Dec-10-2021 | NA | Updated the screenshots to reflect the latest UI. |
| Apr-06-2021 | 1.60 | Updated the section, Handling Different Data Types in Source Data, to include Amazon Redshift as a Destination where Hevo performs data type promotion. |