Google Analytics 4

Google Analytics 4 (GA 4) is the latest version of Google Analytics. It allows in-depth assessment of user experiences across your websites and applications using reports. Each of these websites and apps is referred to as a GA 4 property and has a unique tracking ID, which enables the monitoring and analysis of activities associated with the respective property.

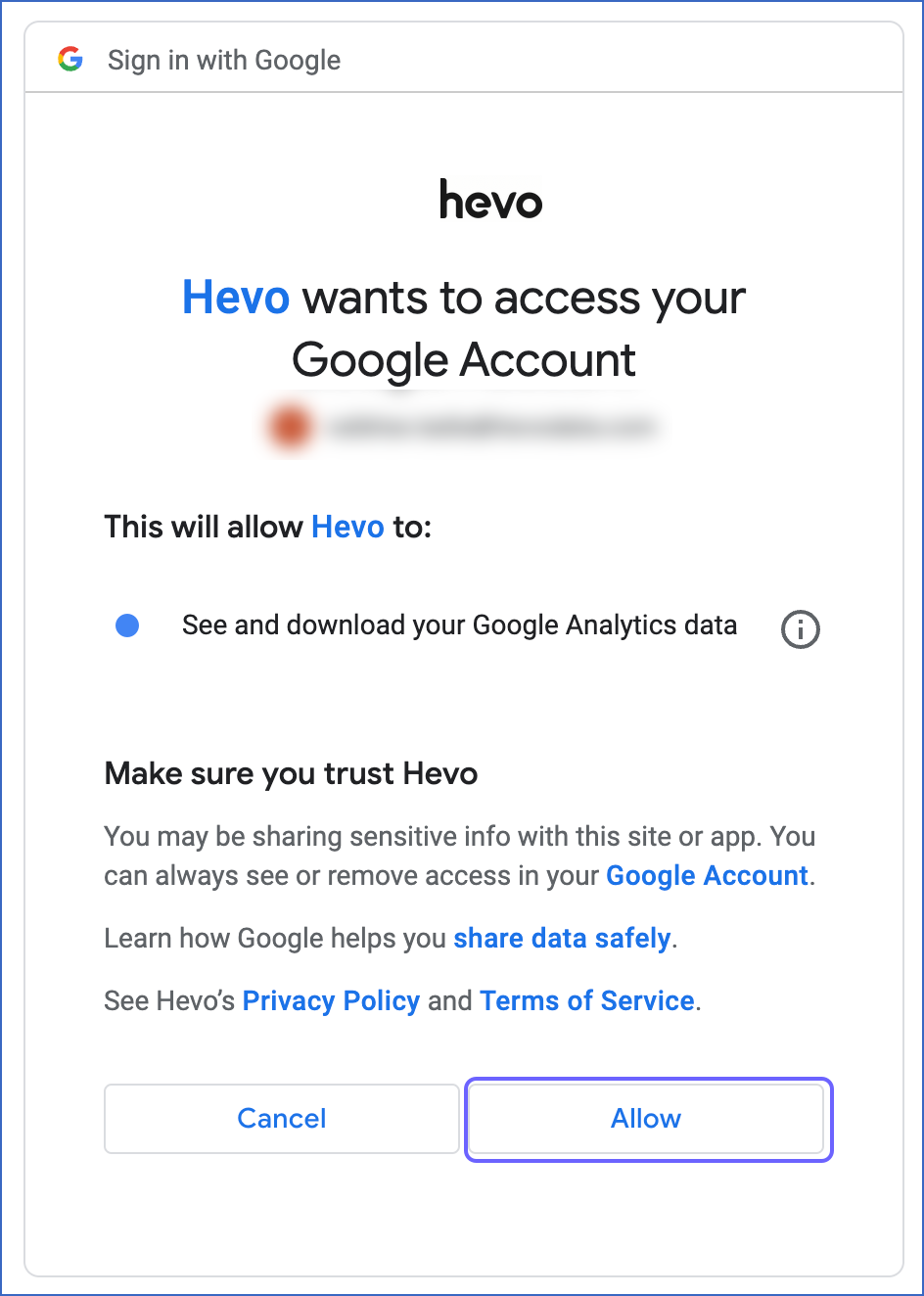

Hevo uses the Google Analytics Data API (GA 4) to ingest data from your GA 4 account. The API provides a specific set of fields, which may differ from those available in other export methods or analytics tools, such as BigQuery Export. You must allow Hevo to access data from your Google account to ingest data.

Prerequisites

- An active Google Analytics 4 (GA 4) account from which data is to be ingested exists.

- You are assigned the Team Administrator, Team Collaborator, or Pipeline Administrator role in Hevo to create the Pipeline.

Configuring the Google Analytics 4 Property

If you are an existing user of Google Analytics, you must configure the GA 4 property in your Google Analytics account before configuring GA 4 as a Source in Hevo.

If you are a new user without an existing Google Analytics account, skip to Configuring Google Analytics 4 as a Source.

Note: Read Configuring the Google Analytics 4 Property for your Firebase Account (Optional) if you want to configure a GA 4 property in your existing Firebase account.

Configuring Google Analytics 4 as a Source

Perform the following steps to configure GA 4 as the Source in Hevo:

- Click PIPELINES in the Navigation Bar.

- Click + Create Pipeline in the Pipelines List View.

- On the Select Source Type page, select Google Analytics 4.

- On the Select Destination Type page, select the type of Destination you want to use.

- On the Configure your Google Analytics 4 account page, do one of the following:

  - Select a previously configured account and click Continue.

  - Click + Add Google Analytics 4 Account and perform the following steps to configure an account:

    - Select your linked Google account.

    - Click Continue.

    - Click Allow to grant Hevo access to your analytics data.

- On the Configure your Google Analytics 4 Source page, specify the following:

  - Pipeline Name: A unique name for your Pipeline, not exceeding 255 characters.

  - Authorized User/Service Account (Non-editable): The email address that you selected earlier when connecting to your Google account. This value is pre-filled.

  - GA4 Account Name: The GA 4 account from which you want to replicate the data. One Google account can contain multiple analytics accounts.

  - Property Name: The website or app from which you want Hevo to read the user data. This field appears once you select the GA4 Account Name from the drop-down.

  - Historical Sync Duration: The duration for which you want to ingest the existing data from the Source. Default duration: 6 Months.

  - In the Report section:

    - Report Name: A unique name for your report, not exceeding 30 characters.

    - Dimensions: The attributes for which you want to see the data in your report. For example, in a Website property, the dimensions can include city, country, and device category. Read Analytics Dimensions and Metrics to know more about the dimensions available in GA 4.

      Note: Date is a mandatory dimension.

    - Metrics: The numerical measurement of data as per the dimensions selected above. For example, in a Website property, the metrics can include the number of viewers, new sign-ups, and number of clicks. Read Analytics Dimensions and Metrics to know more about the metrics available in GA 4.

      Note: You can use the GA4 Dimensions and Metrics Explorer to check the compatibility of the selected dimensions and metrics, and then validate the query generated with them using the GA4 Query Explorer.

    - Pivot Report: If enabled, Hevo creates additional reports by rearranging the data with a subset of dimensions and metrics from the above report. Default setting: Disabled.

      - Pivot Dimensions: The subset of dimensions from the parent report for which you want to rearrange the data.

        Note: Date is a mandatory dimension.

      - Pivot Metrics: The subset of metrics from the parent report for which you want to rearrange the data.

      - Pivot Aggregation Function: The aggregation function you want to use to rearrange the data.

    - Advanced Options: The conditions to filter the data from the report based on your business requirement.

      - Click Add New Metric Clause + to add a metric filter clause.

      - In the Metric Filter Clauses section:

        - Logical Operator: Defines how multiple conditions within a metric filter clause are combined. For example, if you select And, all conditions must be true for a record to be included. If you select Or, a record meeting any one condition will be included. Default operator: Or.

        - Include or Exclude: The option to include or exclude records from the report that meet the filter conditions.

        - Metric: The specific metric to filter data from the report.

        - Comparison operator: The type of comparison to filter the metric values against the number you provide. Default operator: Numeric Equal.

        - Comparison Value: The numeric value to compare against the metric values. For example, if you set this to 100 with the comparison operator Numeric Equal, the filter includes only records where the metric value is 100.

        Click ADD METRIC + to add another condition within the metric filter clause.

      - Click Add New Dimension Clause + to add a dimension filter clause.

      - In the Dimension Filter Clauses section:

        - Logical Operator: Defines how multiple conditions within a dimension filter clause are combined. Default operator: Or.

        - Include or Exclude: The option to include or exclude records from the report that meet the filter conditions.

        - Dimension: The specific dimension to filter data from the report.

        - Comparison operator: The type of comparison to filter the dimension values against the expression you provide. Default operator: Regexp.

        - Expression: The text value or regular expression to compare against the dimension values. For example, if you set the expression `^New` with the operator Regexp, the filter includes only records where the dimension value starts with New, such as New York or New Delhi.

        - Case Sensitive: If selected, the above expression is treated as case-sensitive.

        Click ADD DIMENSION + to add another condition within the dimension filter clause.

- (Optional) Click + ADD ANOTHER REPORT to add up to five reports.

- Click Test & Continue.

- Proceed to configuring the data ingestion and setting up the Destination.
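The filter clause options described in the steps above can be sketched in code. This is only an illustration of the documented semantics (And/Or, Include/Exclude, Numeric Equal, Regexp, Case Sensitive), not Hevo's implementation. The class and function names are hypothetical, and the sketch assumes that Regexp matches anywhere in the value unless the expression is anchored, which is consistent with the `^New` example.

```python
import re
from dataclasses import dataclass


@dataclass
class MetricCondition:
    """One condition in a metric filter clause (Numeric Equal only)."""
    metric: str
    value: float  # the Comparison Value

    def matches(self, row: dict) -> bool:
        return float(row[self.metric]) == self.value


@dataclass
class DimensionCondition:
    """One condition in a dimension filter clause (Regexp only)."""
    dimension: str
    expression: str
    case_sensitive: bool = False  # assumption: unselected means case-insensitive

    def matches(self, row: dict) -> bool:
        flags = 0 if self.case_sensitive else re.IGNORECASE
        # Assumption: partial match, so "^New" means "starts with New".
        return re.search(self.expression, str(row[self.dimension]), flags) is not None


def clause_keeps(row: dict, conditions: list, operator: str = "or",
                 exclude: bool = False) -> bool:
    """Return True if the row stays in the report after applying one clause."""
    results = [condition.matches(row) for condition in conditions]
    matched = all(results) if operator == "and" else any(results)
    # Exclude inverts the decision: matching rows are removed instead of kept.
    return not matched if exclude else matched


rows = [
    {"city": "New York", "sessions": 100},
    {"city": "Paris", "sessions": 100},
    {"city": "new delhi", "sessions": 40},
]
starts_with_new = [DimensionCondition("city", "^New", case_sensitive=True)]
kept = [row["city"] for row in rows if clause_keeps(row, starts_with_new)]
```

With the default Or operator, a record is kept as soon as any one condition in the clause is true. Switching to And requires every condition to hold.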

Data Replication

| For Teams Created | Default Ingestion Frequency | Minimum Ingestion Frequency | Maximum Ingestion Frequency | Custom Frequency Range (in Hrs) |
|---|---|---|---|---|
| Before Release 2.21 | 1 Hr | 15 Mins | 12 Hrs | 1-12 |
| After Release 2.21 | 6 Hrs | 30 Mins | 24 Hrs | 1-24 |

Note: The custom frequency must be set in hours as an integer value. For example, 1, 2, or 3, but not 1.5 or 1.75.
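As a rough illustration of the rule in the note above, the following sketch checks whether a custom frequency is a whole number of hours within the range from the table. The function name and its `max_hours` parameter are hypothetical. Pass 12 for teams created before Release 2.21 and 24 otherwise.

```python
def is_valid_custom_frequency(hours, max_hours: int = 24) -> bool:
    """Return True if `hours` is an integer within 1..max_hours."""
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(hours, bool) or not isinstance(hours, int):
        return False
    return 1 <= hours <= max_hours


checks = {value: is_valid_custom_frequency(value) for value in (1, 2, 3, 1.5, 1.75)}
```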

- Historical Data: The first run of the Pipeline ingests historical data for the selected reports on the basis of the historical sync duration specified at the time of creating the Pipeline and loads it to the Destination. Default duration: 6 Months.

- Incremental Data: Once the historical data ingestion is complete, every subsequent run of the Pipeline fetches new and updated data for the reports as per the ingestion frequency.

  Note: Hevo retrieves the incremental data for the reports in a single run of the Pipeline by creating batches of data rather than calling the API for each report separately. This is done to reduce the number of calls made to the GA 4 API.

- Data Refresh: Hevo re-ingests the data daily for the past three days.
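The date ranges implied by the historical sync and the data refresh can be sketched as follows. This is an approximation for illustration only: both helper names are hypothetical, and the sketch assumes that the refresh window consists of the three calendar days ending on the current day, which this page does not specify.

```python
import calendar
from datetime import date, timedelta


def months_ago(day: date, months: int) -> date:
    """Return the date `months` calendar months before `day`."""
    index = day.year * 12 + (day.month - 1) - months
    year, month_index = divmod(index, 12)
    month = month_index + 1
    # Clamp the day for shorter months, for example Aug 31 -> Feb 28.
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(day.day, last_day))


def refresh_window(today: date, days: int = 3) -> tuple[date, date]:
    """Return (start, end) of the refresh window.

    Assumption: the window is inclusive and ends on `today`.
    """
    return today - timedelta(days=days - 1), today


historical_start = months_ago(date(2025, 8, 31), 6)  # default duration: 6 Months
window = refresh_window(date(2025, 11, 6))
```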

Schema and Primary Keys

- Hevo uses the dimensions in a report as primary keys.

- To represent pivot reports, Hevo adds the suffix `_pivot` to the report name.

- The `aggregation_function` column in the pivot report contains sum, minimum, maximum, or count values. For example, if you want to check the maximum page views for each page on your website daily, the count value represents the number of page views, and the maximum value represents the maximum page views.
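To make the pivot naming and the aggregation values more concrete, here is a small sketch that groups parent report rows by the pivot dimensions and computes each aggregation. The row layout, the `pivot_rows` helper, and the sample dimension and metric names are assumptions made for illustration. The actual structure of the table that Hevo creates may differ.

```python
from collections import defaultdict

# Labels follow the values listed for the aggregation_function column.
AGGREGATIONS = {"sum": sum, "minimum": min, "maximum": max, "count": len}


def pivot_rows(report_name: str, rows: list, pivot_dimensions: list, metric: str):
    """Return (table_name, records) for a hypothetical pivot of `rows`."""
    groups = defaultdict(list)
    for row in rows:
        key = tuple(row[name] for name in pivot_dimensions)
        groups[key].append(row[metric])

    records = []
    for key, values in groups.items():
        for label, aggregate in AGGREGATIONS.items():
            record = dict(zip(pivot_dimensions, key))
            record["aggregation_function"] = label
            record[metric] = aggregate(values)
            records.append(record)
    # Pivot reports carry the _pivot suffix on the report name.
    return f"{report_name}_pivot", records


parent_rows = [
    {"date": "20250101", "pagePath": "/home", "deviceCategory": "desktop", "screenPageViews": 3},
    {"date": "20250101", "pagePath": "/home", "deviceCategory": "mobile", "screenPageViews": 5},
]
table, records = pivot_rows("pages", parent_rows, ["date", "pagePath"], "screenPageViews")
```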

Additional Information

Read the detailed Hevo documentation for the following related topics:

Source Considerations

- To fetch data for the Interests, User Age, and Gender dimensions, you must:

- Each report can include a maximum of 9 dimensions and 10 metrics.
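The report limits above, together with the mandatory Date dimension, can be checked before a report is configured. The sketch below validates a report definition and builds a request body in the shape used by the Google Analytics Data API `runReport` method (`dateRanges`, `dimensions`, `metrics`). The helper name is hypothetical, and whether Hevo sends exactly this body is an assumption.

```python
MAX_DIMENSIONS = 9
MAX_METRICS = 10


def build_report_request(dimensions: list, metrics: list,
                         start_date: str, end_date: str) -> dict:
    """Validate a report definition and return a runReport-style body."""
    if "date" not in dimensions:
        raise ValueError("Date is a mandatory dimension")
    if len(dimensions) > MAX_DIMENSIONS:
        raise ValueError(f"at most {MAX_DIMENSIONS} dimensions are allowed")
    if len(metrics) > MAX_METRICS:
        raise ValueError(f"at most {MAX_METRICS} metrics are allowed")
    return {
        "dateRanges": [{"startDate": start_date, "endDate": end_date}],
        "dimensions": [{"name": name} for name in dimensions],
        "metrics": [{"name": name} for name in metrics],
    }


request = build_report_request(
    ["date", "city", "country", "deviceCategory"],
    ["activeUsers", "sessions"],
    "2025-01-01",
    "2025-01-31",
)
```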

Limitations

- Hevo does not support the ingestion of cohort dimensions and metrics.

- Hevo might take more than 24 hours to fetch accurate data for some reports because of the GA 4 data freshness intervals. Therefore, data replication might not be accurate, leading to discrepancies in the Destination. To avoid this, you can add a clause and exclude dimensions with null values while configuring the Source.

- Hevo does not load data from a column into the Destination table if its size exceeds 16 MB, and skips the Event if it exceeds 40 MB. If the Event contains a column larger than 16 MB, Hevo attempts to load the Event after dropping that column’s data. However, if the Event size still exceeds 40 MB, then the Event is also dropped. As a result, you may see discrepancies between your Source and Destination data. To avoid such a scenario, ensure that each Event contains less than 40 MB of data.
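The size rule in the last limitation can be read as a two-stage check, sketched below. How Hevo measures sizes is not stated on this page, so the sketch assumes that an Event is a mapping of column names to byte strings, that the Event size is the sum of its column sizes, and that 1 MB is 1024 * 1024 bytes. The function name and parameters are hypothetical.

```python
MB = 1024 * 1024  # assumption: binary megabytes
MAX_COLUMN_BYTES = 16 * MB
MAX_EVENT_BYTES = 40 * MB


def apply_size_limits(event: dict, max_column: int = MAX_COLUMN_BYTES,
                      max_event: int = MAX_EVENT_BYTES):
    """Return the Event with oversized columns emptied, or None if it is skipped."""
    # Stage 1: drop the data of any column that exceeds the column limit.
    trimmed = {
        name: (value if len(value) <= max_column else None)
        for name, value in event.items()
    }
    # Stage 2: skip the Event if what remains still exceeds the Event limit.
    remaining = sum(len(value) for value in trimmed.values() if value is not None)
    if remaining > max_event:
        return None
    return trimmed


# Small limits keep the example cheap to run; the defaults are 16 MB and 40 MB.
loaded = apply_size_limits({"a": b"x" * 17, "b": b"y" * 10}, max_column=16, max_event=40)
skipped = apply_size_limits({"a": b"x" * 15, "b": b"y" * 15, "c": b"z" * 15},
                            max_column=16, max_event=40)
```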

See Also

Revision History

Refer to the following table for the list of key updates made to this page:

| Date | Release | Description of Change |
|---|---|---|
| Nov-06-2025 | NA | Updated the document as per the latest Hevo UI. |
| Oct-29-2025 | NA | Updated section, Configuring Google Analytics 4 as a Source to add description for the advanced options. |
| Sep-18-2025 | NA | Updated section, Configuring Google Analytics 4 as a Source as per the latest UI. |
| Jul-18-2025 | NA | Updated section, Configuring Google Analytics 4 as a Source to add a note about GA4 Query Explorer. |
| Jul-07-2025 | NA | Updated the Limitations section to inform about the max record and column size in an Event. |
| Jun-13-2025 | NA | Updated the Source Considerations section to add a limitation about GA4 reports. |
| Mar-21-2025 | NA | Updated section, Configuring Google Analytics 4 as a Source to add note about the Date dimension. |
| Jan-07-2025 | NA | Updated the Limitations section to add information on Event size. |
| Mar-05-2024 | 2.21 | Updated the ingestion frequency table in the Data Replication section. |
| Aug-28-2023 | NA | Updated the page as per the latest Hevo functionality. |
| Jul-12-2023 | NA | Updated section, Limitations to add information about GA4 data freshness intervals. |
| Apr-25-2023 | NA | Updated the page to move information about Firebase Analytics to the Firebase Analytics page. |
| Apr-04-2023 | NA | Updated section, Configuring Google Analytics 4 as a Source for more clarity. |
| Sep-05-2022 | NA | Updated section, Data Replication to reorganize the content for better understanding and coherence. |
| Oct-25-2021 | NA | Added the Pipeline frequency information in the Data Replication section. |
| Jul-26-2021 | NA | Added the section, Source Considerations. |
| Jun-28-2021 | 1.66 | New document. |